Industry bodies and automation vendors are advancing cross-vendor machine vision standards into real-world manufacturing pilots, targeting longstanding interoperability barriers that have slowed AI-based inspection deployments on high-mix, high-variability production lines. The push is accelerating as multiple sectors, including automotive, electronics, and metalworking, seek consistent defect detection performance across heterogeneous camera, edge device, and AI model configurations.

Background

The need for standardization has grown alongside the complexity of modern AI inspection deployments. As manufacturers adopt AI-enhanced vision systems, standards play a vital role in ensuring interoperability, ease of integration, and long-term scalability. Without consistent frameworks, deploying machine vision solutions across different platforms, devices, and applications remains time-consuming, costly, and error-prone.

Organizations including the Association for Advancing Automation (A3), alongside global partners EMVA, JIIA, VDMA, and CMVU, have developed a suite of international machine vision standards through the G3 global standards group. These standards simplify integration, reduce costs, and accelerate adoption across manufacturing sectors. The GenICam standard, administered by the EMVA, provides a common software interface across camera types and transport protocols. Separately, the GigE Vision Technical Committee approved GigE Vision 3.0 on April 17, 2026, according to A3.

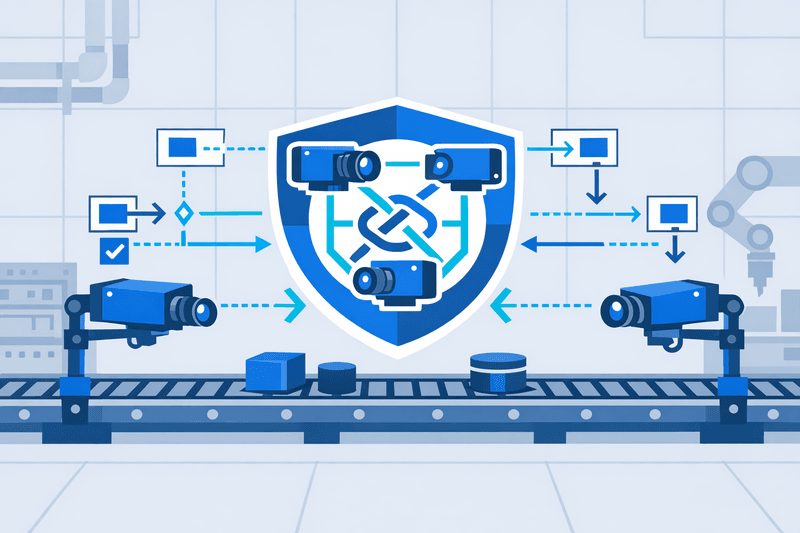

Phil-vision's contribution to the GenICam standard (the protocol enabling cameras from different manufacturers to communicate with vision software in a consistent, predictable way) positions the company at the foundation of modern machine vision interoperability. Standardized interfaces provide supply chain security, allowing users to switch or combine components from different manufacturers without redesigning entire systems.

Details

Despite established hardware-layer standards, the transition from controlled pilot to full production remains the central challenge for AI inspection deployments. Around 77% of AI vision implementations in manufacturing never advance beyond the pilot phase. Industry practitioners attribute the bottleneck less to hardware gaps than to system-level complexity. According to Karamat of aku.automation, "The high failure rate of AI vision pilots is, in our assessment, not primarily a technology problem. It is a complexity and ownership problem." Pilots typically succeed because they operate under controlled conditions with dedicated specialist attention; the step to production (across shifts, plants, varying operators, and legacy infrastructure) introduces a different order of challenge.

At the edge computing layer, fragmentation between AI model frameworks and hardware accelerators compounds the interoperability problem. Each neural processing unit (NPU) vendor typically provides its own tools, compilers, and runtime libraries, many tightly coupled to specific versions of the host operating system and model frameworks. Updating one component can break compatibility with others. Proprietary software stacks and chip architectures lead to vendor lock-in and reduced flexibility, while ecosystem fragmentation forces redundant development efforts as models must be adjusted for different hardware, lengthening design cycles and increasing deployment costs.

The industrial machine vision market was valued at $14.85 billion in 2025 and is projected to reach $25.79 billion by 2032, a compound annual growth rate of 8.2%, according to industry analysts. Over 65% of manufacturers now deploy vision-based tools to boost product accuracy and reduce manual errors, and around 58% of systems utilize AI-based recognition capabilities for precision defect detection.
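
The growth rate quoted above can be checked against the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short Python sketch below is an illustration only: the function name `cagr` is hypothetical, and it assumes the 2025 to 2032 window counts as seven compounding years.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.082 means 8.2%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market figures from the paragraph above, in billions of US dollars.
# Assumption: 2025 -> 2032 is treated as 7 compounding periods.
rate = cagr(14.85, 25.79, 2032 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

Under that seven-year assumption, the two market values are consistent with the stated 8.2% rate.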

On the software interoperability front, the ONNX (Open Neural Network Exchange) format has emerged as a cross-framework solution. ONNX Runtime operates on the standard ONNX format, providing a robust path to interoperability between different frameworks and hardware. Meanwhile, platform vendors are expanding multi-source support: at Hannover Messe 2026, Siemens announced significant expansions to its Industrial Edge ecosystem, including new partner solutions for machine vision and quality inspection alongside IEC 62443-4-2-certified cybersecurity functions.
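
As a rough illustration of what this portability looks like in practice, the sketch below picks an ONNX Runtime execution provider from whatever a given edge device reports as available, so the same exported model file can be loaded on different hardware. The preference order and the helper names `pick_providers` and `load_session` are assumptions made for this example; the provider strings are ONNX Runtime's own names, and `onnxruntime` is a third-party package that must be installed separately.

```python
from typing import List, Sequence

# Illustrative preference order (an assumption, not a recommendation).
# The strings are execution provider names used by ONNX Runtime.
PREFERRED_PROVIDERS = (
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "OpenVINOExecutionProvider",
    "CPUExecutionProvider",
)


def pick_providers(
    available: Sequence[str],
    preferred: Sequence[str] = PREFERRED_PROVIDERS,
) -> List[str]:
    """Keep the preferred providers that this machine actually offers, in order."""
    chosen = [name for name in preferred if name in available]
    if not chosen:
        raise ValueError("none of the preferred execution providers is available")
    return chosen


def load_session(model_path: str):
    """Open an ONNX model on the best available backend (hypothetical helper)."""
    # Imported lazily so the selection logic above works without onnxruntime.
    import onnxruntime as ort

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

On a device that reports only `CPUExecutionProvider`, `pick_providers` returns just that entry, while a GPU-equipped device would list its accelerator first. The model file itself does not change between the two.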

AI visual inspection systems for quality control deliver more than 99% accuracy, compared with 85% for manual inspection, yet most implementations fail within 18 months. Failed pilots waste $200,000 to $500,000 in capital and 6 to 12 months of engineering time.

Outlook

The edge AI market is demonstrating steady growth. According to IDC's Edge AI Processor and Accelerator Forecast, edge processors and accelerators will become a $52 billion market by 2029, with a five-year CAGR of 16.1%. Standards bodies are expected to continue accelerating release cycles: the EMVA has scheduled the next GenICam working group meeting for October 2026 as part of the IVSM Fall session in Ottawa, Canada. Technology providers, from semiconductor vendors to software developers and system integrators, must collaborate to reduce fragmentation, accelerate time-to-market, and lower total cost of ownership as the industry shifts toward distributed AI.