AI-driven machine vision is moving from pilot programs into full-scale production at metal fabricators handling high-variety part runs. The shift is driven by advances in AI-guided robotics, tighter MES and ERP integration, and a widening gap between what rule-based automation can handle and what modern mixed-production environments demand.

Background

High-mix, low-volume (HMLV) fabrication has historically resisted automation because traditional fixed systems require extensive reprogramming and fixturing whenever part geometry changes. Collaborative robots and AI-powered vision systems address this limitation, offering flexibility and faster programming suited to HMLV environments. The pressure to automate has intensified: the biggest operational shift in fabrication in 2025 is not AI replacing operators but the widespread adoption of smarter automation, improved sensing, and better production data visibility.

The market underpinning this transition is expanding rapidly. The machine vision systems market was valued at USD 20.4 billion in 2024 and is projected to reach USD 41.7 billion by 2030, growing at a compound annual growth rate of 13%, according to industry analysis. Separately, the global robotic welding market is expected to grow from USD 10.38 billion in 2025 to USD 16.87 billion by 2030, at a CAGR of 10.2%.
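The quoted growth rates can be sanity-checked against the start and end values with the standard compound annual growth rate formula. The sketch below is illustrative only. It assumes the compounding periods are six years (2024 to 2030) for machine vision and five years (2025 to 2030) for robotic welding, which the source figures imply but do not state explicitly. The function name `cagr` is a choice made here for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.13 means 13%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market figures quoted in the paragraph above, in USD billions.
# Assumption: the base year is the year each valuation is quoted for.
vision = cagr(20.4, 41.7, 2030 - 2024)
welding = cagr(10.38, 16.87, 2030 - 2025)

print(f"machine vision:  {vision:.1%}")
print(f"robotic welding: {welding:.1%}")
```

Under those assumptions both results land within rounding distance of the published 13% and 10.2% figures.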

Details

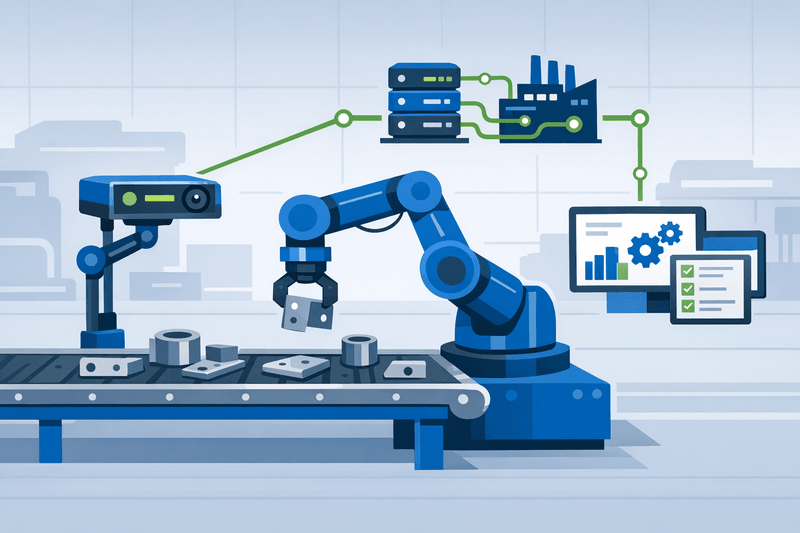

Real-world deployments illustrate what the transition looks like on the shop floor. Through its Teqram subsidiary, Tollenaar Industries has developed vision-based automation systems tailored for custom fabricators and other high-product-mix operations. A common thread runs through nearly all of the company's projects: no manual programming or teach pendants. Instead, AI-powered vision technology guides automation. Cells installed at Accurate Metals Illinois in Rockford, Ill., and at Tosec BV in the Netherlands automate grinding, deslagging, and part sorting without specialized fixtures or per-part programming.

The most consequential question in AI vision is not whether systems can detect defects; competently built systems can. The question is whether they can determine why defects occur, trace them to upstream process variables, and initiate corrections before the next component leaves the station. That gap between detection and prevention is where much current development is focused. "Today, most AI vision in manufacturing is reactive," said Zeeshan Karamat, CTO and co-founder of 36ZERO Vision. "A defect is identified, flagged, and a human investigates. The defect has already occurred, and the underlying cause remains unaddressed until manual analysis is completed."

On integration, the architecture challenge is substantial. A vision system cannot exist in isolation; it must connect with production equipment, conveyors, reject mechanisms, PLCs, HMIs, and higher-level Manufacturing Execution Systems. Industrial protocols such as EtherNet/IP, PROFINET, and OPC UA handle this communication, and clean integration with PLCs and SCADA systems is essential for production deployment. The OPC Foundation and VDMA Machine Vision have published a companion specification to meet this need. The OPC UA companion specification for machine vision aims to simplify integration of machine vision systems into production control and IT systems by establishing a standardized information model that spans machine PLCs, line PLCs, MES, ERP, and cloud environments. Feeding vision-system data into ERP applications allows users to access inspection results in familiar formats. By adopting OPC UA, manufacturers gain interoperability, real-time monitoring, secure data exchange, and greater scalability.
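To make the data flow concrete, the following is a minimal sketch of how one inspection result might fan out to the two kinds of consumer described above: a reject mechanism at the PLC level and a record for MES or ERP. It is plain Python and does not use any OPC UA library, nor does it reproduce the companion specification's information model. Every name in it (`InspectionResult`, `reject_signal`, `to_mes_payload`, and all field names) is hypothetical and chosen only for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class InspectionResult:
    """One vision inspection outcome (hypothetical fields, not a standard model)."""
    job_id: str    # production order the part belongs to
    part_id: str   # identifier of the inspected part
    passed: bool   # overall pass/fail verdict from the vision system
    defects: List[str] = field(default_factory=list)  # labels of detected defects


def reject_signal(result: InspectionResult) -> bool:
    """Boolean that a PLC-facing layer could map to a reject-gate output."""
    return not result.passed


def to_mes_payload(result: InspectionResult) -> str:
    """Serialize the result as JSON for a higher-level system such as MES."""
    return json.dumps(asdict(result), sort_keys=True)


result = InspectionResult("WO-1001", "P-0042", passed=False, defects=["slag"])
print(reject_signal(result))
print(to_mes_payload(result))
```

In a real deployment the transport would be one of the industrial protocols named above rather than a JSON string; the sketch only shows that the same result object serves both a real-time control decision and a traceability record.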

Economic thresholds matter as much as technical capability. Collaborative robot deployments typically achieve payback within 12 to 24 months, while IoT-based MES implementations show payback periods of 6 to 14 months, according to industry benchmarks. The full business value of MES is realized only through bidirectional ERP integration: ERP sends production orders and BOMs to MES, while MES returns actual quantities, real cycle times, and quality results. This feedback loop corrects cost-of-goods inaccuracies of 15 to 30% that arise when ERP relies on standard times rather than measured ones. Advanced vision systems deliver measurable quality gains as well: machine vision reduces inspection errors by over 90% and defect rates by up to 95%. Unlike human inspectors, these systems operate continuously without fatigue, accelerating cycle times and providing real-time data, with some systems capable of inspecting up to 2,400 parts per minute.
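The two calculations behind these figures are simple enough to sketch. The example below computes a simple payback period and the deviation between an ERP standard time and the actual cycle time reported by MES. All numbers, the part identifier, and the names `OperationReport`, `payback_months`, and `time_variance` are hypothetical and are not drawn from any cited benchmark. Simple (undiscounted) payback is an assumption made here for brevity.

```python
from dataclasses import dataclass


@dataclass
class OperationReport:
    """What MES might return to ERP for one operation (hypothetical fields)."""
    part_id: str
    standard_minutes: float  # routing time held in ERP
    actual_minutes: float    # cycle time measured on the shop floor


def payback_months(investment: float, monthly_net_savings: float) -> float:
    """Simple payback: months until cumulative savings equal the investment."""
    if monthly_net_savings <= 0:
        raise ValueError("monthly_net_savings must be positive")
    return investment / monthly_net_savings


def time_variance(report: OperationReport) -> float:
    """Fractional deviation of actual time from the ERP standard time."""
    return (report.actual_minutes - report.standard_minutes) / report.standard_minutes


# Illustrative values only.
report = OperationReport("BRKT-001", standard_minutes=10.0, actual_minutes=12.5)
print(f"time variance: {time_variance(report):+.0%}")
print(f"payback: {payback_months(120_000, 8_000):.1f} months")
```

With these made-up inputs the operation runs 25% over its standard time, which sits inside the 15 to 30% range cited above, and the cell pays back in 15 months, inside the 12 to 24 month range cited for collaborative robots.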

Workforce transition accompanies the technical shift. Manufacturing roles increasingly blend hands-on fabrication skills with digital capabilities. Operators now program, monitor, and optimize advanced equipment while collaborating with automated systems. Successful AI adoption depends as much on people as on technology: operators, engineers, and planners need visibility into AI-driven decisions and trust in them. Industry practitioners recommend designating plant-level "AI champions" who bridge IT and OT teams and serve as change agents during rollout.

Outlook

As demand shifts toward flexible production, mobile and modular robotic solutions will enable quick redeployment across workstations, supporting high-mix manufacturing. For fabricators considering staged rollouts, practitioners advise starting with a single vision-guided cell in the shop's highest-variability area and validating calibration accuracy and MES data integrity before extending to broader cell configurations. Industry guidance recommends prioritizing projects with a payback period under 12 months and continuing to track ROI after deployment to build organizational confidence for subsequent capital commitments.