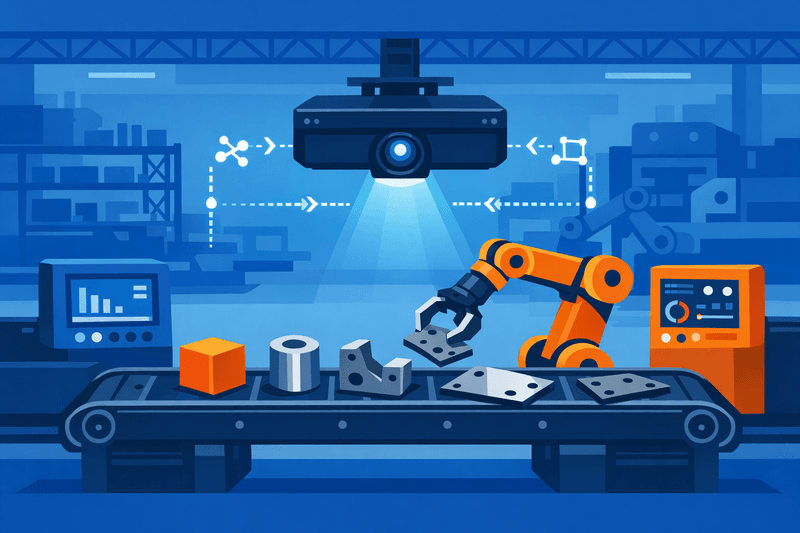

Machine vision systems powered by artificial intelligence are completing their transition from controlled pilot programs to full-scale production deployment across high-mix metal fabrication shops, driven by converging cross-vendor data standards, modular architectures, and falling integration costs. The shift marks an inflection point for custom fabricators handling diverse part geometries, for whom fixed-tooling automation has historically been impractical and consistent quality has depended on skilled labor that is increasingly difficult to find.

Background

High-mix, low-volume fabrication has long resisted broad automation because the economics of traditional robotic cells - extensive teach-pendant programming, dedicated fixtures, and single-vendor ecosystems - rarely justified the investment when batch sizes were small and geometries varied continuously. That constraint is easing as AI-driven vision systems remove the need for manual path programming. Tollenaar Industries' Teqram subsidiary first deployed AI-guided grinding, sorting, and finishing cells at its own fabrication operations in the Netherlands and Germany before expanding the technology to contract shops in the United States, including Accurate Metals Illinois in Rockford, according to reporting by The Fabricator. That model - validate in-house, then commercialize - has become a common pilot-to-production template across the sector.

A parallel structural shift is occurring in the standards layer underpinning these systems. ROS 1 Noetic, the final distribution of the original Robot Operating System, reached end-of-life in May 2025, according to industry sources, accelerating migration to ROS 2 across new industrial deployments. At the same time, OPC UA - the manufacturer-independent communication standard for industrial automation - is gaining traction as the preferred data exchange layer connecting vision systems, robot controllers, and PLCs across multi-vendor cells. All major industrial robot manufacturers, including ABB, FANUC, KUKA, Universal Robots, and Yaskawa, now offer ROS 2 drivers, enabling multi-vendor robot fleets to be coordinated through a single software framework rather than parallel proprietary systems, according to automation integrators.

Details

The interoperability achieved through OPC UA and ROS 2 is reshaping changeover economics in high-mix environments, where cells must be reconfigured frequently. OPC UA supports complex data structures and method calls and offers a high degree of interoperability, allowing machine vision solutions to be integrated flexibly into existing production environments, according to Basler, a machine vision hardware provider. In brownfield installations, interfaces such as REST APIs, gRPC, and EtherCAT bridges complement these protocols, allowing vision-guided cells to connect to legacy PLCs without a full infrastructure replacement.
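To make the brownfield point concrete, here is a minimal Python sketch of the kind of translation such a bridge performs: a nested inspection result (the sort of structured type OPC UA can model natively) is flattened into fixed scalar tags that a legacy PLC can poll. The classes `Defect` and `InspectionResult`, the function `flatten_for_plc`, and every tag name are hypothetical illustrations, not part of OPC UA, Basler's products, or any PLC vendor's tag map.

```python
from dataclasses import dataclass, field


@dataclass
class Defect:
    # Hypothetical defect record produced by a vision model
    label: str
    confidence: float
    x_mm: float
    y_mm: float


@dataclass
class InspectionResult:
    # Hypothetical nested result, the kind of structure OPC UA can carry as-is
    part_id: str
    passed: bool
    defects: list[Defect] = field(default_factory=list)


def flatten_for_plc(result: InspectionResult, max_defects: int = 4) -> dict[str, object]:
    """Flatten a nested result into a fixed set of scalar tags.

    Tag names are hypothetical; a real bridge would follow the PLC's own tag map.
    """
    tags: dict[str, object] = {
        "PartID": result.part_id,
        "Pass": result.passed,
        # True count, even if it exceeds the number of slots below
        "DefectCount": len(result.defects),
    }
    # Fixed number of slots: pad with empty values or truncate extra defects
    for i in range(max_defects):
        d = result.defects[i] if i < len(result.defects) else None
        tags[f"Defect{i}_Label"] = d.label if d else ""
        tags[f"Defect{i}_Conf"] = d.confidence if d else 0.0
        tags[f"Defect{i}_X"] = d.x_mm if d else 0.0
        tags[f"Defect{i}_Y"] = d.y_mm if d else 0.0
    return tags


result = InspectionResult("P-1001", False, [Defect("burr", 0.93, 12.5, 40.0)])
print(flatten_for_plc(result))
```

Padding to a fixed number of defect slots reflects how older controllers typically address data, as fixed registers rather than variable-length arrays; a production bridge would then publish these values over whatever protocol the PLC actually speaks.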

On the inspection side, AI-driven defect detection systems now show measurable performance gains over legacy rule-based automated optical inspection (AOI). AI systems achieve 97-99% detection accuracy, and false positive rates fall from roughly 50%, characteristic of legacy systems, to approximately 4-10%, substantially reducing manual inspection workload, according to industry analyses. Industrial robot perception patent filings also rose sharply, with 228 confirmed filings in 2025 compared with 70 in 2024; 2025 alone accounted for about 44% of the 517 patents filed across 2017-2026, according to an analysis on PatSnap's innovation intelligence platform. The surge suggests that laboratory advances are translating into commercially deployable systems at scale.
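The patent figures can be cross-checked with simple arithmetic. Taking PatSnap's counts at face value, going from 70 to 228 filings is an increase of roughly 226%, while 228 of 517 patents is about 44%, so the 44% figure appears to correspond to 2025's share of the analyzed sample rather than its year-over-year growth.

```python
# Counts as reported from PatSnap's analysis
filings_2024 = 70
filings_2025 = 228
total_2017_2026 = 517

# Year-over-year growth from 2024 to 2025
yoy_growth = (filings_2025 - filings_2024) / filings_2024

# Share of the whole 2017-2026 sample that was filed in 2025
share_2025 = filings_2025 / total_2017_2026

print(f"YoY growth: {yoy_growth:.0%}")  # 226%
print(f"2025 share: {share_2025:.0%}")  # 44%
```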

The transition from pilot to production nonetheless exposes integration risk. Organizations frequently underestimate the complexity of moving from pilot projects to full deployment. Proof-of-concept experiments may show promising results, but scaling across multiple production lines introduces additional challenges such as variable lighting, changing part designs, and fluctuating line speeds, according to industry analyses. Fabricators adopting staggered rollouts mitigate this risk by sequencing cell deployments so that active learning pipelines can accumulate production-representative training data before expansion. Some AI defect detection models can begin training with as few as 20-40 labeled images per defect class, lowering the data barrier for shops introducing new part families.
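Active learning pipelines commonly rely on uncertainty sampling: the model flags the production frames it is least confident about, and those go to a human for labeling first. The Python sketch below assumes a simple `(frame_id, confidence)` input format and uses the low end of the 20-40 image range as a per-class readiness threshold; the function names and data format are hypothetical, and the article does not say which selection strategy any vendor uses.

```python
from collections import Counter


def select_for_labeling(predictions, budget):
    """Uncertainty sampling: return the frames the model is least sure about.

    `predictions` is a list of (frame_id, top_class_confidence) pairs, a
    hypothetical format rather than any vendor's API.
    """
    ranked = sorted(predictions, key=lambda p: p[1])  # lowest confidence first
    return [frame_id for frame_id, _ in ranked[:budget]]


def classes_ready(labels, min_per_class=20):
    """Return defect classes with enough labeled images to start training.

    The default threshold of 20 is the low end of the 20-40 range in the text.
    """
    counts = Counter(labels)
    return sorted(c for c, n in counts.items() if n >= min_per_class)


preds = [("f1", 0.98), ("f2", 0.51), ("f3", 0.77), ("f4", 0.55)]
print(select_for_labeling(preds, 2))  # ['f2', 'f4']

labels = ["burr"] * 25 + ["scratch"] * 12
print(classes_ready(labels))  # ['burr']
```

In a staggered rollout, a loop like this would run on each newly deployed cell, so classes that are rare in the pilot accumulate labels before the next cell goes live.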

For small-to-medium enterprises (SMEs), budget constraints remain the primary barrier to adoption. Vendors are increasingly offering software-as-a-service models and managed services to expand their customer base in this segment, according to market research. The global vision-guided robotics software market reached USD 2.34 billion in 2024 and is projected to grow at a compound annual growth rate (CAGR) of 12.7% through 2033, reaching USD 6.91 billion, according to market data.
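A short compound-growth calculation shows the market figures are consistent within rounding. Compounding USD 2.34 billion at 12.7% for the nine years from 2024 to 2033 gives about USD 6.86 billion, and the rate implied by the two endpoints is about 12.8%; the small gap may reflect rounding or a different base-year convention in the underlying report.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at `rate` for `years` periods."""
    return start * (1 + rate) ** years


# Reported: USD 2.34 billion (2024) to USD 6.91 billion (2033), 9 compounding years
years = 2033 - 2024
print(f"Implied CAGR: {cagr(2.34, 6.91, years):.1%}")        # 12.8%
print(f"2033 value at 12.7%: {project(2.34, 0.127, years):.2f}")  # 6.86
```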

Data governance is also emerging as an operational concern at scale. As vision systems generate continuous inspection telemetry - frame captures, defect classifications, process timestamps, and operator review records - fabricators face questions about on-premises versus cloud storage, audit trail integrity, and model version control. Edge inference architectures, which run vision models locally without depending on the cloud, remain the dominant approach for latency-sensitive inspection tasks in metal fabrication environments.
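One common way to make an inspection audit trail tamper-evident is hash chaining: each record stores the hash of the previous one, so altering any earlier entry invalidates everything after it. The sketch below is an illustrative assumption rather than a description of any vendor's system. The field names (`part_id`, `model_version`, `defect`) are hypothetical; storing the model version with each decision is one simple way to tie classifications back to the model that produced them.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # sort_keys makes the JSON, and therefore the hash, deterministic
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], record: dict) -> dict:
    """Append a record whose hash also covers the previous entry's hash.

    `record` must not use the reserved keys "prev" or "hash".
    """
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"prev": prev_hash, **record}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check each entry links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_record(log, {"part_id": "P-1001", "model_version": "v3.2", "defect": "burr"})
append_record(log, {"part_id": "P-1002", "model_version": "v3.2", "defect": "none"})
print(verify(log))  # True
log[0]["defect"] = "none"  # simulate an after-the-fact edit
print(verify(log))  # False
```

Hash chaining detects tampering but does not prevent it; where the log lives (on-premises or in the cloud) and who can rewrite the whole chain remain separate governance decisions.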

Outlook

Standards bodies and industry consortia are expected to publish updated OPC UA Companion Specifications for robotic vision applications in the coming months, further reducing integration friction for cross-vendor cells. Fabricators planning multi-cell or multi-facility rollouts are increasingly adopting modular architectures that standardize on interoperable calibration and inference components, allowing model updates to be deployed without hardware rework. As AI model accuracy continues to improve through active learning feedback loops, the performance gap between pilot-cell results and full-production deployments is narrowing, lowering the risk that has historically kept SMEs from moving beyond initial proof-of-concept stages.
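A shared calibration component often reduces to a rigid transform that maps points seen by the camera into the robot's base frame. The Python sketch below assumes a 4x4 homogeneous transform with rotation about the vertical axis only (a real hand-eye calibration produces a full 3D rotation); the function names and example numbers are hypothetical, and the article does not specify any particular calibration format.

```python
import math

Matrix4 = list[list[float]]


def make_transform(yaw_rad: float, tx: float, ty: float, tz: float) -> Matrix4:
    """Build a 4x4 homogeneous transform: rotation about Z plus a translation.

    Yaw-only rotation keeps the sketch short; real calibrations are full 3D.
    """
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def camera_to_base(T_base_cam: Matrix4, point_cam: tuple[float, float, float]) -> tuple[float, float, float]:
    """Map a point detected in the camera frame into the robot base frame."""
    p = (*point_cam, 1.0)  # homogeneous coordinates
    x, y, z = (sum(T_base_cam[r][k] * p[k] for k in range(4)) for r in range(3))
    return (x, y, z)


# Hypothetical calibration: camera rotated 90 degrees, mounted 800 mm above the base
T = make_transform(math.pi / 2, 500.0, 0.0, 800.0)
print(camera_to_base(T, (100.0, 0.0, -750.0)))
```

Because downstream motion code only consumes `camera_to_base`, replacing a camera or inference model means re-running calibration to produce a new transform rather than reworking the robot program, which is the kind of decoupling modular architectures aim for.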