Fewer than one in three AI inspection pilots ever reach full production deployment. The technology itself is rarely the barrier. What stalls rollouts is integration complexity: proprietary data formats, disconnected OT and IT systems, opaque model governance, and the prohibitive cost of re-engineering every production line from scratch. A coordinated push for cross-vendor machine vision standards now addresses each of those friction points - and its effects are being felt across metal fabrication, plastics processing, and consumer goods assembly simultaneously.

Why Pilots Stall at the Line's Edge

The industrial machine vision market was valued at $14.85 billion in 2025 and is projected to reach $25.79 billion by 2032 at an 8.2% CAGR, according to industry analysis. Yet market growth has consistently outpaced deployment depth: over 65% of manufacturers now deploy some form of vision-based inspection tool, but most remain confined to one or two stations rather than plant-wide systems.
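As a quick sanity check on those figures, the projection can be reproduced with the standard compound-growth formula. This is a minimal sketch; the function name `project_value` is illustrative, and it assumes the 8.2% rate compounds annually over the seven years from 2025 to 2032.

```python
def project_value(start_value: float, annual_rate: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1.0 + annual_rate) ** years


# Figures quoted above: $14.85B in 2025, 8.2% CAGR, target year 2032 (7 years).
projected_2032 = project_value(14.85, 0.082, 2032 - 2025)
print(f"Projected 2032 market size: ${projected_2032:.2f}B")
```

Under those assumptions the result comes out at roughly $25.8 billion, in line with the quoted projection.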

The root cause is integration debt. Traditional approaches require custom drivers for each PLC brand, bespoke connectors for MES platforms, and separate telemetry pipelines per vendor. Each inspection station becomes an island. When a manufacturer attempts to scale - adding lines, sites, or new product families - integration cost multiplies rather than amortizes. Without consistent data schemas, a defect signal captured at a stamping press cannot be automatically correlated with weld parameters captured three stations downstream.
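To make the correlation point concrete, here is a minimal sketch of what a shared schema makes possible: once both stations report the same part identifier, linking a defect to downstream weld parameters is a simple join. The records, field names (`part_id`, `current_a`), and station names are hypothetical and do not come from any vendor schema.

```python
from collections import defaultdict

# Hypothetical records; field names are illustrative, not a vendor schema.
defects = [
    {"part_id": "P-1001", "station": "stamping-press-1", "defect": "edge_burr"},
    {"part_id": "P-1003", "station": "stamping-press-1", "defect": "scratch"},
]
weld_params = [
    {"part_id": "P-1001", "station": "weld-cell-4", "current_a": 182.0},
    {"part_id": "P-1002", "station": "weld-cell-4", "current_a": 175.5},
    {"part_id": "P-1003", "station": "weld-cell-4", "current_a": 190.2},
]


def correlate_by_part(defect_records, process_records):
    """Attach downstream process records to each defect via the shared part_id."""
    by_part = defaultdict(list)
    for record in process_records:
        by_part[record["part_id"]].append(record)
    return [
        {**defect, "downstream": by_part.get(defect["part_id"], [])}
        for defect in defect_records
    ]


linked = correlate_by_part(defects, weld_params)
for row in linked:
    currents = [r["current_a"] for r in row["downstream"]]
    print(row["part_id"], row["defect"], currents)
```

In a siloed setup, each station would use its own identifiers and formats, so this join would first need a per-vendor translation step.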

Compounding the problem, AI models deployed in production are difficult to audit. Procurement teams and quality engineers lack visibility into which datasets trained the model, what version is running, or how to trace a false-accept back to a model component. As regulatory expectations tighten across jurisdictions, that opacity creates compliance exposure.

The Standards Stack Enabling Full-Scale Deployment

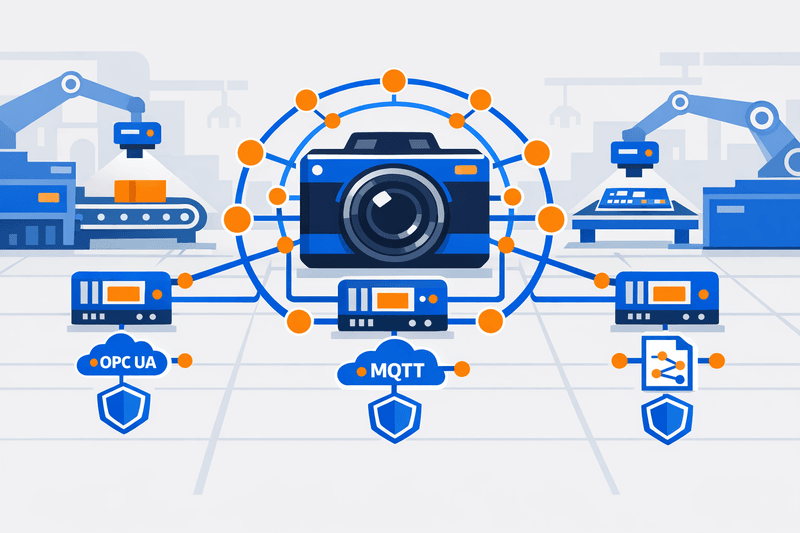

Three interoperability standards are converging to remove these barriers. Together they form a deployable infrastructure layer that makes AI vision systems vendor-agnostic, auditable, and replicable across lines.

OPC UA (Open Platform Communications Unified Architecture) is now the de facto standard for structured data exchange between machines, PLCs, SCADA, MES, and ERP systems. OPC UA now has over 850 registered members and more than 100 companion specifications covering device types across industries, according to iFactory. Vision sensors and inline gauges can publish inspection results - defect classifications, confidence scores, part IDs - as semantically rich OPC UA nodes that any compliant system consumes without custom parsing. OPC UA Companion Specifications extend this model specifically to machine vision and surface inspection equipment, meaning a camera system from one vendor can expose its outputs in the same structure as a competing system.

MQTT with Unified Namespace (UNS) handles the high-velocity telemetry layer. Where OPC UA provides the semantic richness needed for plant-floor machine-to-machine communication, MQTT's lightweight publish-subscribe model carries that data efficiently from edge devices to cloud analytics and AI inferencing platforms. OPC UA over MQTT is now supported by every major cloud provider and PLC vendor. A standardized topic hierarchy - for example, site/line/station/sensor - allows inspection results from a metal fabrication press to flow through the same broker infrastructure as results from a plastics injection line, with no per-line reconfiguration.
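The site/line/station/sensor hierarchy can be sketched with nothing more than the Python standard library. The example below only builds the topic string and a JSON payload; it does not connect to a broker. The helper names (`build_topic`, `build_payload`), the payload fields, and the allowed-character rule are assumptions for illustration, not part of any UNS specification.

```python
import json
import re
from datetime import datetime, timezone

# Assumed naming rule: letters, digits, underscore, and hyphen only.
_SEGMENT = re.compile(r"^[A-Za-z0-9_-]+$")


def build_topic(site: str, line: str, station: str, sensor: str) -> str:
    """Join the four hierarchy levels into an MQTT topic string."""
    segments = [site, line, station, sensor]
    for segment in segments:
        # '/', '+', and '#' are structural or wildcard characters in MQTT
        # topics, so they are kept out of individual segment names.
        if not _SEGMENT.match(segment):
            raise ValueError(f"invalid topic segment: {segment!r}")
    return "/".join(segments)


def build_payload(part_id: str, defect_class: str, confidence: float) -> str:
    """Serialize one inspection result as JSON (hypothetical field names)."""
    return json.dumps(
        {
            "part_id": part_id,
            "defect_class": defect_class,
            "confidence": confidence,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        },
        sort_keys=True,
    )


topic = build_topic("plant-a", "line-3", "press-2", "camera-1")
payload = build_payload("P-1001", "edge_burr", 0.97)
print(topic)
print(payload)
```

Because every line uses the same four levels, a consumer could subscribe with a standard MQTT wildcard such as `plant-a/+/+/camera-1` instead of maintaining a per-line topic list.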

Software Bill of Materials (SBOM) for AI systems addresses the governance gap. CISA, in collaboration with NSA and 19 international partners, released joint guidance in September 2025 outlining a shared vision for SBOM implementation in cybersecurity and industrial software, per CISA's official release. In the AI inspection context, an AI-BOM extends the SBOM concept to machine learning components: it inventories the datasets, model weights, training configurations, and version history that define how a vision model behaves. When a model is retrained on new defect examples, the AI-BOM updates accordingly, giving quality engineers and auditors a clear chain of custody. The EU Cyber Resilience Act, which entered into force in 2024, phases in SBOM obligations for manufacturers of connected industrial equipment - accelerating adoption beyond voluntary compliance.
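A minimal sketch of the AI-BOM idea follows: a record that inventories a model's datasets, weights hash, and training configuration, plus a fingerprint that changes whenever any of them changes. The class `AIBOMEntry` and its fields are hypothetical and deliberately simplified; they do not follow a published SBOM schema, and the weights hashes are computed from placeholder bytes rather than real model files.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field, replace


@dataclass
class AIBOMEntry:
    """One versioned inventory record for a vision model (illustrative fields)."""
    model_name: str
    model_version: str
    weights_sha256: str
    datasets: tuple
    training_config: dict = field(default_factory=dict)

    def fingerprint(self) -> str:
        """Hash the canonical JSON form so any component change is detectable."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Placeholder bytes stand in for real weight files in this sketch.
v1 = AIBOMEntry(
    model_name="surface-defect-classifier",
    model_version="1.0.0",
    weights_sha256=hashlib.sha256(b"weights-v1").hexdigest(),
    datasets=("defects-2024Q4",),
    training_config={"epochs": 30},
)

# Retraining on new defect examples yields a new record with a new fingerprint.
v2 = replace(
    v1,
    model_version="1.1.0",
    weights_sha256=hashlib.sha256(b"weights-v2").hexdigest(),
    datasets=v1.datasets + ("defects-2025Q1",),
)

print(v1.model_version, v1.fingerprint()[:12])
print(v2.model_version, v2.fingerprint()[:12])
```

Storing each record alongside the deployed model would let an auditor confirm which datasets and weights sat behind a given inspection decision.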

The table below contrasts deployment under a siloed approach versus a standards-aligned stack:

{{component:comparison-placeholder}}

Cross-Industry Deployments Shortening Time-to-Value

The practical impact of these standards is visible in deployment timelines across different substrates.

Metal fabrication: In high-throughput stamping and welding environments, AI vision systems integrated natively with PLCs over OPC UA have achieved inspection-to-rejection latencies under 15 milliseconds, with false-reject rates reduced from 3% to 0.1% in documented deployments. Because the OPC UA information model is standardized, adding a new inspection station requires configuring the UNS topic - not rebuilding the integration. Shops report deployment timelines compressing from several months to weeks when reusable templates are in place.

Plastics and polymer processing: Surface defect detection in injection-molded parts presents a different challenge: high part-to-part variation in texture and color under variable lighting. AI-based systems address this because deep learning models learn acceptable variation rather than relying on fixed brightness or contrast thresholds. AI inspection systems achieve 99%+ defect detection rates on defects that human inspectors catch only 80% of the time, according to overview.ai. Where MQTT-based telemetry links vision outputs to process parameters - mold temperature, injection pressure, cycle time - cloud ML models can correlate defect patterns with upstream conditions, enabling scrap reductions of 15-20% in documented installations.

Consumer goods assembly: Multi-SKU assembly lines face constant changeover pressure. AI-driven AOI systems can be deployed across multiple production lines with minimal incremental cost, enabling quality standard replication across facilities. The standardized deployment model - containerized inference models pushed to edge compute via a CI/CD pipeline, with results published over a common MQTT broker - means a consumer goods manufacturer can introduce a new SKU's inspection profile across 12 global sites in days rather than quarters.

A Five-Step Path from Pilot to Production

{{component:steps-placeholder}}

Defect Detection Consistency Across Substrates

One benefit that cuts across all three sectors is inspection consistency. AI systems apply identical quality standards across every shift, every part, every hour, eliminating the shift-to-shift interpretation drift that afflicts manual inspection. A single AI-powered camera system can inspect 3,000-10,000 parts per hour at 99%+ accuracy, compared to a trained human inspector managing 300-500 parts per hour. For multi-site manufacturers, the standardized namespace ensures defect classification rules are defined once and enforced consistently - a quality manager in a German fab shop sees the same defect taxonomy as a counterpart in a Thai assembly plant.

The traceability architecture created by combining OPC UA's digital thread with MQTT's telemetry backbone also satisfies requirements that previously demanded separate quality management system investments. Inspection images, defect classifications, part IDs, timestamps, and upstream process parameters link automatically - creating audit-ready records for PPAP, FAI, and regulatory submissions without manual compilation.

{{widget:readiness-placeholder}}

Outlook

The convergence of OPC UA companion specifications, MQTT-based unified namespaces, and SBOM governance frameworks has removed the primary technical objections to scaling AI vision beyond the pilot. The deployment model is shifting from bespoke integration projects to infrastructure-as-code: standardized models, topic hierarchies, and AI-BOMs that can be versioned, tested, and distributed across sites.

For plant managers and quality engineers evaluating their next inspection investment, the practical question is no longer whether AI defect detection is accurate enough - AI-based systems significantly outperform traditional rule-based approaches, achieving detection rates close to 100% in production environments. The question is whether the underlying data infrastructure is standards-compliant enough to make that accuracy portable across every line and every site. Shops that align to OPC UA, MQTT with UNS, and SBOM practices now are building the foundation for full-production AI inspection at speeds their competitors will struggle to match.

For a deeper look at how these principles apply specifically in high-mix metal fabrication environments, see our earlier analysis on vision-guided automation for high-mix production lines.

FAQ

What is the difference between OPC UA and MQTT in an AI inspection context? OPC UA provides semantically rich, structured data exchange between machines and control systems on the plant floor - ideal for linking vision inspection results to PLCs, MES, and SCADA. MQTT is a lightweight publish-subscribe protocol better suited to high-frequency telemetry transport from edge devices to cloud platforms. Most production-grade AI inspection architectures use both: OPC UA for in-plant communication and MQTT to carry data upward to analytics and AI inferencing layers.

Why does an SBOM matter for machine vision software? AI inspection models are not static software - they are retrained as new defect types emerge, and their behavior depends on the datasets used to train them. An SBOM (or AI-BOM) provides a machine-readable inventory of every model component: datasets, weights, training configurations, and version history. This enables vulnerability management, regulatory compliance (including EU Cyber Resilience Act obligations), and rapid response when a model component introduces risk.

How long does it realistically take to move from a pilot to full production with standardized vision systems? With a standards-compliant infrastructure in place - OPC UA endpoints, a defined MQTT unified namespace, and containerized model deployment - validated inspection configurations can be replicated to additional lines in weeks rather than months. The 6-12 week pilot phase establishes baseline metrics and a configuration template; subsequent sites benefit from that investment without re-engineering the integration.

Does AI vision inspection require replacing existing cameras? Not necessarily. Many modern AI inference platforms connect to existing GigE or USB industrial cameras through standard interfaces, adding deep learning capabilities to legacy hardware. Some platforms also offer OPC UA or MQTT connectors as software layers that sit on top of existing vision systems, avoiding full hardware replacement while still enabling standardized data sharing.

Which industries are currently seeing the fastest adoption of cross-vendor vision standards? Metal fabrication, automotive supply chain, electronics assembly, and plastics processing are among the early adopters, driven by high part volumes, tight defect tolerances, and existing Industry 4.0 infrastructure. Consumer goods manufacturers are accelerating adoption primarily due to SKU proliferation and the need to maintain consistent quality standards across distributed global production sites.