Human visual inspection misses 20-30% of defects under real production conditions, with accuracy degrading 15-25% after just two hours of continuous observation (AI Vision Inspection Guide 2026). For high-mix metal fabricators running sheet, tube, and structural profiles through the same facility, that inconsistency compounds into significant scrap cost, rework delays, and customer claims. The emerging answer is not simply adding more cameras but fusing data from multiple sensor types and multiple AI vendors into a unified, vendor-neutral inspection pipeline.

Several U.S. mid-market fabrication shops are now piloting exactly this approach: cross-vendor AI defect detection architectures combining high-speed cameras, laser-based profilers, and tactile probes with inference engines from competing AI providers. The goal is to break vendor lock-in, standardize data inputs, and build a composable automation stack flexible enough to handle rapid part changeovers without sacrificing quality.

Why Cross-Vendor Sensor Fusion Now?

Manufacturing environments were among the earliest adopters of machine vision, and modern factory floors integrate a diverse mix of sensing modalities: cameras for visual inspection, LiDAR for spatial relationships, vibration and proximity sensors for machine state changes, and environmental IoT devices for conditions that affect quality. Historically, however, each sensor type came tethered to its own vendor software, proprietary data formats, and closed analytics layers.

Three converging factors are driving the push toward cross-vendor architectures:

- High-mix production complexity. Fabricators processing diverse metal forms need inspection systems that adapt to part geometry, surface finish, and material type without re-engineering the vision pipeline for each product family.

- Vendor lock-in costs. A typical facility runs equipment from a dozen different vendors (a Fanuc robot feeding a Siemens-controlled press, which delivers to a Cognex vision station), each speaking its own language. Engineers cobble together proprietary drivers and middleware just to share basic data. The result is brittle, expensive, and nearly impossible to scale.

- Multi-modal defect coverage. Sensor fusion, which combines data from multiple sensors to produce more reliable information, yields a more robust understanding of the physics behind manufacturing processes and significantly improves product quality control. A single camera cannot catch dimensional drift that a laser profiler measures or subsurface porosity that an acoustic sensor can reveal; combining modalities closes these detection gaps.

What the Pilot Architecture Looks Like

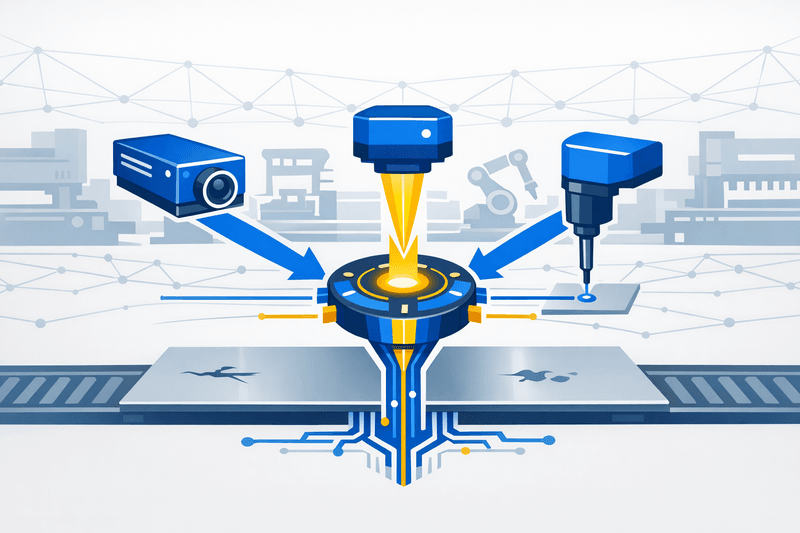

The cross-vendor pilot model emerging in U.S. fabrication shops typically layers three sensor modalities onto a shared edge-computing backbone:

- High-speed line-scan cameras capturing surface defects (scratches, dents, scale, inclusions) at production speed.

- Laser profilers or structured-light scanners measuring dimensional accuracy, warp, and cross-section geometry on tube and structural profiles.

- Tactile or force sensors providing contact-based feedback on surface roughness and forming consistency that optical methods alone may miss.

Modern defect detection systems use a combination of computer vision and machine learning techniques, each suited to different inspection challenges. Rather than relying on a single algorithm, effective systems layer multiple methods to handle variation, speed, and complexity. In the cross-vendor model, raw sensor outputs feed into a normalization layer, typically an edge gateway, where data is translated into a shared schema before reaching AI inference engines from two or more providers running in parallel.

Edge AI processing runs on NVIDIA Jetson or L4 GPU hardware with sub-100 ms inference, and all data stays on-premises, a critical requirement for fabricators concerned about intellectual property and latency. The parallel-inference approach allows shops to benchmark competing AI models against the same fused sensor stream under actual production conditions, selecting the best-performing engine per defect category.
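As a rough illustration of the normalization layer described above, the sketch below translates two vendor payloads into one shared record type and hands the result to two inference engines. Everything named here is an assumption made for the example: the `Observation` schema, the raw payload field names, and the threshold-based `engine_a` and `engine_b` stubs are hypothetical and do not come from OPC UA Vision or any vendor API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Observation:
    """Hypothetical shared schema; field names are illustrative only."""
    part_id: str
    modality: str       # "camera" or "laser" in this sketch
    timestamp_s: float  # seconds, one clock for all sensors
    feature: str
    value_m: float      # lengths normalized to metres


def from_camera(raw: dict) -> Observation:
    # Assumed vendor A payload: millisecond timestamps, defect length in pixels.
    return Observation(raw["part"], "camera", raw["ts_ms"] / 1000.0,
                       "scratch_length", raw["len_px"] * raw["m_per_px"])


def from_laser(raw: dict) -> Observation:
    # Assumed vendor B payload: second timestamps, deviation in micrometres.
    return Observation(raw["id"], "laser", raw["t"],
                       "warp", raw["dev_um"] * 1e-6)


Engine = Callable[[List[Observation]], Dict[str, bool]]


def engine_a(obs: List[Observation]) -> Dict[str, bool]:
    # Stand-in for one vendor's model: flags features above 2 mm.
    return {o.feature: o.value_m > 0.002 for o in obs}


def engine_b(obs: List[Observation]) -> Dict[str, bool]:
    # Stand-in for a competing model with a tighter 1 mm threshold.
    return {o.feature: o.value_m > 0.001 for o in obs}


def run_engines(obs: List[Observation],
                engines: Dict[str, Engine]) -> Dict[str, Dict[str, bool]]:
    # Called one after another here for simplicity; a real gateway would
    # likely dispatch to the engines concurrently.
    return {name: fn(obs) for name, fn in engines.items()}


fused = [
    from_camera({"part": "P-001", "ts_ms": 1500, "len_px": 30, "m_per_px": 0.00005}),
    from_laser({"id": "P-001", "t": 1.52, "dev_um": 800.0}),
]
verdicts = run_engines(fused, {"engine_a": engine_a, "engine_b": engine_b})
```

Because both engines receive the same `fused` list, any disagreement between their verdicts reflects the models rather than differences in input handling, which is what makes a side-by-side benchmark meaningful.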

| Challenge | Single-Vendor Stack | Cross-Vendor Sensor Fusion |
|---|---|---|
| Sensor flexibility | Locked to proprietary cameras and formats | Mix cameras, laser scanners, and tactile sensors from multiple OEMs |
| Data interoperability | Native within vendor ecosystem only | Standardized via OPC UA Vision and REST/MQTT gateways |
| AI inference engine | Single vendor model | Multiple AI engines evaluated per use case |
| Scalability | Scale within one vendor's catalog | Composable stack; swap or add best-of-breed components |
| Vendor lock-in risk | High | Low (standardized perception interfaces) |
| Integration complexity | Low (pre-integrated) | Moderate (requires calibration and data normalization) |

The Interoperability Layer: OPC UA Vision and Emerging Standards

Data interoperability is the central technical challenge. Disparate sensors produce outputs in different formats, at different rates, with different coordinate systems. Without a normalization standard, the fused data pipeline becomes its own brittle integration project.
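To make the rate and coordinate mismatch concrete, here is a minimal sketch that pairs camera frames with the nearest laser sample in time and maps a sensor-frame point into a shared part frame. The function names, sample timestamps, and the 30 ms skew limit are all assumptions chosen for illustration; a production gateway would also need synchronized clocks and a calibrated transform.

```python
from bisect import bisect_left
import math


def nearest_index(sorted_times, t):
    """Index of the sample whose timestamp is closest to t."""
    i = bisect_left(sorted_times, t)
    if i == 0:
        return 0
    if i == len(sorted_times):
        return len(sorted_times) - 1
    # Pick the closer neighbour; ties go to the earlier sample.
    return i if sorted_times[i] - t < t - sorted_times[i - 1] else i - 1


def align(camera_times, laser_times, max_skew_s):
    """Pair each camera frame with the nearest laser sample within max_skew_s."""
    pairs = []
    for ci, t in enumerate(camera_times):
        li = nearest_index(laser_times, t)
        if abs(laser_times[li] - t) <= max_skew_s:
            pairs.append((ci, li))
    return pairs


def to_part_frame(x, y, angle_rad, tx, ty):
    """Rigid 2D transform (rotate, then translate) into the shared part frame."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x - s * y + tx, s * x + c * y + ty)


# Hypothetical streams: camera at 10 Hz, laser at roughly 20 Hz with a gap.
camera_times = [0.00, 0.10, 0.20, 0.30]
laser_times = [0.02, 0.07, 0.12, 0.17, 0.22, 0.40]
pairs = align(camera_times, laser_times, max_skew_s=0.03)
point = to_part_frame(1.0, 0.0, math.pi / 2, 10.0, 0.0)
```

Frames with no laser sample inside the skew window are dropped rather than paired with stale data, so the last camera frame in this example has no partner.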

The VDMA OPC Vision Initiative, a joint effort of VDMA Machine Vision and the OPC Foundation, has developed an OPC UA companion specification for machine vision covering any vision system, smart camera, or vision sensor capable of extracting information from digital images on the shop floor. The OPC UA Vision companion specification (VDMA 40100) provides a standardized information model for machine vision systems and is available free of charge (OPC Foundation).

The specification's scope extends beyond replacing existing interfaces. It creates horizontal and vertical integration pathways to communicate relevant data to each authorized process participant, from machine PLC to line PLC to enterprise IT systems. For cross-vendor pilots, this means a laser profiler from one OEM and a camera system from another can both publish inspection results in a common information model that the AI inference layer consumes without custom drivers.

In mechanical engineering alone, more than 40 working groups have created OPC UA information models as companion specifications, and the ecosystem continues to expand. The 3rd Interoperability Summit is scheduled for May 7, 2026, in Bonn, Germany, co-hosted by ECLASS, GS1 Germany, and VDMA Machine Information Interoperability (VDMA), a signal that standards bodies are accelerating harmonization efforts relevant to fabricators building multi-vendor stacks.

Fabricators that have already adopted vision-guided automation in high-mix environments are well positioned to extend those systems with cross-vendor sensor fusion by adding an OPC UA gateway layer to existing camera infrastructure.

Early ROI Signals From Pilot Programs

The financial case for AI-driven inspection is well documented in single-vendor deployments. AI vision inspection systems achieve 95-99% detection accuracy, inspect 10,000+ parts per hour at sub-100 ms inference speed, and have demonstrated 37% defect reduction with 374% three-year ROI and 7-8 month average payback (iFactory, AI Vision Inspection Guide 2026). Cross-vendor pilots are now tracking whether the multi-modal, multi-engine approach can push those numbers further while reducing total cost of ownership.

Early indicators point to benefits across several dimensions:

- Scrap reduction. Fusing optical and dimensional data catches defects that either modality alone would miss. Fabricators report catching forming-related dimensional drift on tube profiles that camera-only systems classified as pass.

- Shorter rework cycles. Automated quality control moves beyond simple defect detection to contextual analysis correlating product anomalies with process variables, enabling faster root-cause identification.

- Process stability. Continuous multi-sensor monitoring creates a richer baseline for statistical process control, flagging drift earlier. Predictive maintenance benefits from sensor fusion that aggregates visual and non-visual indicators to identify degradation patterns sooner than any single signal would allow.

- Extended KPI tracking. Beyond defect rate, pilots measure throughput impact, energy consumption per inspected unit, and maintenance interval extensions, metrics that strengthen the business case for full-plant rollout.
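The process-stability point above, flagging drift earlier from a statistical baseline, can be sketched with an exponentially weighted moving average (EWMA) control chart. This is one common statistical process control technique, swapped in here as an example rather than taken from the pilots; the tube diameter readings, smoothing factor, and band width are hypothetical.

```python
from statistics import mean, stdev
import math


def ewma_drift_index(baseline, samples, lam=0.2, width=3.0):
    """Index of the first sample whose EWMA leaves the control band, else None."""
    mu, sigma = mean(baseline), stdev(baseline)
    # Asymptotic EWMA control limit: width * sigma * sqrt(lam / (2 - lam)).
    limit = width * sigma * math.sqrt(lam / (2.0 - lam))
    z = mu  # start the moving average at the baseline mean
    for i, x in enumerate(samples):
        z = lam * x + (1.0 - lam) * z
        if abs(z - mu) > limit:
            return i
    return None


# Hypothetical tube outer-diameter readings in millimetres.
baseline = [50.00, 50.02, 49.98, 50.01, 49.99, 50.00, 50.02, 49.98]
drifting = [50.01, 50.02, 50.03, 50.05, 50.06, 50.07]
stable = [50.01, 49.99, 50.00, 50.01]

first_alarm = ewma_drift_index(baseline, drifting)
no_alarm = ewma_drift_index(baseline, stable)
```

In this example no single drifting reading is more than three baseline standard deviations from the mean when the alarm fires, so a per-sample limit alone would not have caught the trend; the moving average accumulates the small consistent shifts.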

Manufacturers deploying AI inspection typically see ROI within 6-12 months through labor savings, reduced scrap, fewer customer returns, and faster throughput (Overview.ai).

Staged Rollout Guidance for Fabricators

For fabricators considering cross-vendor AI defect detection, a disciplined staged approach minimizes risk and produces the verified data needed to justify expansion.

Step 1: Audit existing sensor and vision assets. Catalog all cameras, laser scanners, tactile probes, and inspection stations. Document data formats, communication protocols, and current integration gaps.

Step 2: Select a high-impact pilot line. Choose a line running mixed material forms with measurable quality pain points: scrap rate above 3%, frequent rework, or customer claim history.

Step 3: Deploy an OPC UA gateway layer. Install edge gateways that normalize sensor outputs into a common data model. Use OPC UA Vision (VDMA 40100) as the baseline interface specification.

Step 4: Integrate multi-vendor AI inference engines. Run parallel inference from at least two AI vendors against the fused sensor data. Compare detection accuracy, latency, and false-positive rates under actual production conditions.

Step 5: Measure pilot KPIs for 90-120 days. Track defect rate, scrap cost, rework cycles, throughput, energy use, and maintenance intervals. Build the business case with plant-verified data, not vendor projections, before scaling.
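The comparison in Step 4 can be sketched as a small scoring routine that computes recall, false-positive rate, and worst-case latency per engine and defect category, then picks a winner per category. The record layout, engine names, and sample values are hypothetical, and ranking by recall first with false-positive rate as the tie-breaker is just one possible policy.

```python
from collections import defaultdict


def score(records):
    """records: (engine, category, predicted_defect, actual_defect, latency_ms)."""
    stats = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0, "lat": []})
    for engine, category, predicted, actual, latency_ms in records:
        s = stats[(engine, category)]
        if predicted:
            s["tp" if actual else "fp"] += 1
        else:
            s["fn" if actual else "tn"] += 1
        s["lat"].append(latency_ms)
    scores = {}
    for key, s in stats.items():
        positives = s["tp"] + s["fn"]
        negatives = s["fp"] + s["tn"]
        scores[key] = {
            "recall": s["tp"] / positives if positives else None,
            "false_positive_rate": s["fp"] / negatives if negatives else None,
            "max_latency_ms": max(s["lat"]),
        }
    return scores


def best_engine_per_category(scores):
    """Highest recall wins; lower false-positive rate breaks ties."""
    best = {}
    for (engine, category), m in scores.items():
        if m["recall"] is None:
            continue  # no true defects seen, so recall is undefined
        rank = (m["recall"], -(m["false_positive_rate"] or 0.0))
        if category not in best or rank > best[category][0]:
            best[category] = (rank, engine)
    return {category: engine for category, (_, engine) in best.items()}


# Hypothetical labelled results from the same fused stream.
records = [
    ("engine_a", "scratch", True, True, 40.0),
    ("engine_a", "scratch", False, True, 42.0),
    ("engine_a", "scratch", False, False, 38.0),
    ("engine_a", "scratch", False, False, 41.0),
    ("engine_b", "scratch", True, True, 60.0),
    ("engine_b", "scratch", True, True, 65.0),
    ("engine_b", "scratch", True, False, 62.0),
    ("engine_b", "scratch", False, False, 61.0),
    ("engine_a", "warp", True, True, 45.0),
    ("engine_a", "warp", False, False, 44.0),
    ("engine_b", "warp", False, True, 70.0),
    ("engine_b", "warp", False, False, 66.0),
]
scores = score(records)
best = best_engine_per_category(scores)
```

Here the second engine wins on scratches despite a higher false-positive rate, which shows why the ranking policy deserves an explicit decision: a shop with expensive rework might weight false positives more heavily.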

Governance for Multi-Vendor Collaboration

A cross-vendor stack requires clear governance:

- Data ownership agreements defining who owns the fused data, model training outputs, and derived insights.

- SLA frameworks for each vendor's inference engine, specifying accuracy thresholds, latency ceilings, and uptime requirements.

- Change management protocols ensuring that AI model updates from one vendor do not degrade the fused pipeline's overall performance.
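One way to make the SLA and change-management items enforceable is an automated gate that a vendor's model update must pass before deployment. The sketch below is a hypothetical example: the `Sla` fields, the metric keys, the 0.95 recall floor, and the one-point regression tolerance are assumptions, with only the 100 ms latency ceiling echoing the sub-100 ms figure cited earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sla:
    min_recall: float
    max_latency_ms: float


def approve_update(current, candidate, sla, tolerance=0.01):
    """Return (approved, reasons) for a candidate model's pilot metrics.

    current and candidate are dicts with 'recall' and 'p95_latency_ms' keys.
    """
    reasons = []
    if candidate["recall"] < sla.min_recall:
        reasons.append("recall below SLA floor")
    if candidate["p95_latency_ms"] > sla.max_latency_ms:
        reasons.append("latency above SLA ceiling")
    # Guard against silent regressions even when the SLA is still met.
    if candidate["recall"] < current["recall"] - tolerance:
        reasons.append("recall regressed versus deployed model")
    return (not reasons, reasons)


sla = Sla(min_recall=0.95, max_latency_ms=100.0)
deployed = {"recall": 0.97, "p95_latency_ms": 80.0}

rejected = approve_update(deployed, {"recall": 0.955, "p95_latency_ms": 90.0}, sla)
accepted = approve_update(deployed, {"recall": 0.98, "p95_latency_ms": 85.0}, sla)
```

The first candidate meets the contractual SLA yet is still rejected because it performs worse than the model already in production, which is the failure mode a change-management protocol is meant to catch.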

Outlook

Cross-vendor AI defect detection represents a structural shift in how metal fabricators approach quality control. Rather than buying into a single vendor's end-to-end ecosystem, the composable automation model lets shops assemble best-of-breed sensors and AI engines, then swap components as technology evolves. The AI-based visual inspection market reached $1.62 billion in 2024 and is growing at a 13.8% CAGR (iFactory, AI Vision Inspection Guide 2026), and the cross-vendor segment is positioned to capture an increasing share as interoperability standards mature.

For fabricators running high-mix operations across sheet, tube, and structural profiles, the path forward is clear: start with a single pilot line, lean on OPC UA as the interoperability backbone, measure rigorously for 90-120 days, and let plant-verified data drive the expansion decision.

Frequently Asked Questions

What does "cross-vendor" mean in the context of AI defect detection? It refers to an inspection architecture where sensors, cameras, and AI inference engines from multiple manufacturers are integrated into a single quality-control pipeline, rather than relying on one vendor's proprietary ecosystem.

What is sensor fusion in metal fabrication? Sensor fusion combines real-time data streams from different inspection modalities, such as high-speed cameras, laser profilers, and tactile probes, into a unified perception layer. This allows AI models to detect a wider range of defects more reliably than any single sensor alone.

How does OPC UA support cross-vendor machine vision? The OPC UA Machine Vision Companion Specification (VDMA 40100) provides a standardized information model and communication interface that allows vision systems from different vendors to exchange data with PLCs, MES, and other factory systems without proprietary drivers.

What ROI can fabricators expect from a pilot? Early pilots report measurable scrap reductions, shortened rework cycles, and improved process stability within 90-120 days. Industry data suggests AI inspection systems can achieve ROI within 6-12 months through labor redeployment, scrap savings, and fewer customer returns.

Is cross-vendor sensor fusion practical for mid-market fabricators? Yes, but it requires deliberate planning. Starting with an OPC UA gateway layer and a single pilot line keeps integration costs manageable while producing the verified data needed to justify broader rollout.