A growing cohort of U.S. metal fabrication facilities is advancing AI-powered defect detection from controlled pilot programs into full production deployment, leveraging cross-vendor sensor fusion architectures to achieve real-time quality control across forming, welding, and finishing operations. The transition marks a measurable shift in how the domestic fabrication sector approaches quality assurance and capital expenditure justification, as clearer return-on-investment data now supports broader rollout decisions.

Background

For decades, manual visual inspection has served as the primary quality gate on metal fabrication lines. Human inspectors at the end of a rolling mill catch 60-70% of surface defects on a good day; on a night shift after eight hours, that figure drops to 40-50%. The consequences are commercially significant: surface quality defects drive 2-5% of total steel production to secondary or reject status, costing $3 million to $12 million annually in downgrade losses alone, before accounting for customer claims, sorting costs, and lost business.

Rule-based machine vision systems offered a partial remedy but imposed their own limitations. Pixel thresholds and edge detection algorithms fail on real metal surfaces, where reflections shift with every coil, scale patterns vary with chemistry, and acceptable cosmetic variation overlaps with true defect signatures. Deep learning models instead learn to separate the two from large training datasets. That capability gap is now driving adoption of deep-learning-based systems at scale.

Technical Architecture and Integration

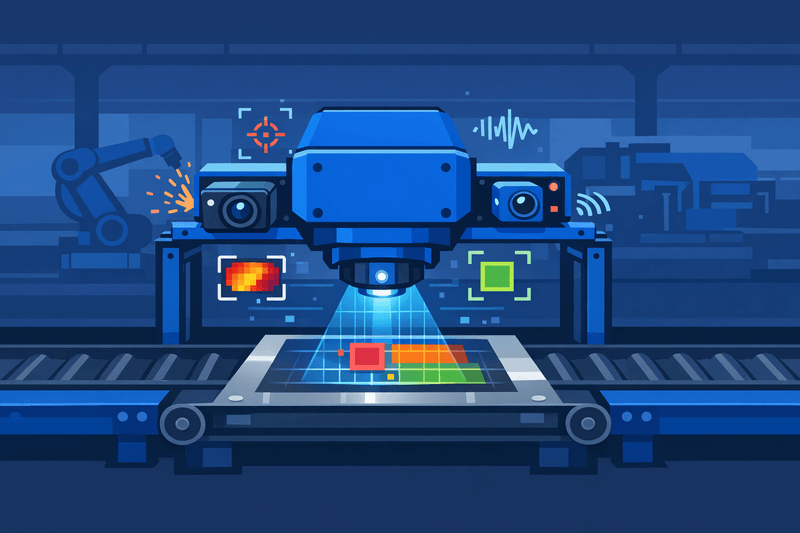

Production deployments are coalescing around edge AI inference hardware paired with multi-modal sensing stacks. Edge AI welding inspection shifts inference to the production floor: cameras, thermal sensors, and acoustic systems feed directly into dedicated computing hardware, with no cloud dependency, no bandwidth bottleneck, and no added network latency. The result is sub-50 ms response times, local data sovereignty, and continuous operation even when network connectivity fails.

For high-speed production lines, latency is not a minor concern. At a travel speed of 2 meters per minute, a 200-millisecond round trip to the cloud means a defect has already traveled about 6.7 mm before the alert fires, a gap that can decide scrap versus salvage in high-speed TIG or laser welding.
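The travel figure follows directly from speed multiplied by latency. The short sketch below reproduces it and compares it with a 50 ms edge response; the function name is illustrative, and the 50 ms case simply reuses the sub-50 ms figure quoted earlier as an upper bound.

```python
def defect_travel_mm(line_speed_m_per_min: float, latency_ms: float) -> float:
    """Distance in mm that the workpiece moves while an alert is in flight."""
    speed_mm_per_s = line_speed_m_per_min * 1000.0 / 60.0
    return speed_mm_per_s * latency_ms / 1000.0


# 2 m/min travel speed, 200 ms cloud round trip vs. a 50 ms edge response.
cloud_mm = defect_travel_mm(2.0, 200.0)
edge_mm = defect_travel_mm(2.0, 50.0)
print(f"cloud: {cloud_mm:.1f} mm, edge: {edge_mm:.1f} mm")
```

Under these inputs the cloud path allows roughly four times as much travel as the edge path, since distance scales linearly with latency.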

Cross-vendor interoperability relies on standardized communication protocols. Inference outputs feed into programmable logic controllers (PLCs) or industrial PCs running welding cell automation, with integration patterns including binary pass/fail signals via hardwired digital I/O, analog quality score outputs, and structured data via OPC UA, MQTT, or REST APIs to MES and quality systems.
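As a rough illustration of those three output patterns, the sketch below derives each one from a single inference result. Everything named here is hypothetical: the `InspectionResult` fields, the 0.80 pass threshold, and the choice of a 4-20 mA current loop for the analog output are assumptions for the example, not details from any vendor's interface. The JSON string is transport-agnostic and could be carried over MQTT, REST, or mapped to OPC UA nodes.

```python
import json
from dataclasses import dataclass, asdict

PASS_THRESHOLD = 0.80  # hypothetical acceptance threshold


@dataclass
class InspectionResult:
    weld_id: str          # hypothetical identifier format
    quality_score: float  # 0.0 (worst) to 1.0 (best)
    defect_class: str


def to_digital_pass(result: InspectionResult) -> bool:
    """Binary pass/fail, as would drive a hardwired digital output."""
    return result.quality_score >= PASS_THRESHOLD


def to_analog_ma(result: InspectionResult) -> float:
    """Scale the score onto an assumed 4-20 mA current loop."""
    clamped = min(max(result.quality_score, 0.0), 1.0)
    return 4.0 + 16.0 * clamped


def to_json_payload(result: InspectionResult) -> str:
    """Structured record for an MES or quality system."""
    body = asdict(result)
    body["pass"] = to_digital_pass(result)
    return json.dumps(body, sort_keys=True)


result = InspectionResult("W-0001", 0.75, "porosity")
print(to_digital_pass(result), to_analog_ma(result))
print(to_json_payload(result))
```

Keeping the three outputs as separate functions over one result object mirrors the idea that the same inference can serve a PLC interlock and a traceability record at once.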

AI vision systems now detect and classify over 200 types of metal surface defects at full production speed (up to 2,000 m/min) with 95-99% accuracy and a minimum detectable defect size of 0.1 mm, inspecting 100% of surface area on both sides simultaneously.

Multi-modal AI systems analyze thermal patterns, vibration signatures, and pressure readings alongside visual data. This sensor fusion approach catches defects that pure visual inspection misses, including internal flaws, material stress, and component degradation.
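One simple way to picture this is late fusion, where each modality produces its own anomaly score and the scores are combined with weights. The sketch below assumes that approach purely for illustration; the article does not say which fusion method production systems use, and the scores, weights, and 0.5 alert threshold are invented values.

```python
from typing import Dict

ALERT_THRESHOLD = 0.5  # hypothetical alert level on a 0-1 anomaly scale


def fuse_scores(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-modality anomaly scores (late fusion)."""
    total_weight = sum(weights[name] for name in scores)
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


# Invented example: the surface looks clean, but thermal and vibration
# readings are abnormal, as with a subsurface flaw.
scores = {"visual": 0.2, "thermal": 0.9, "vibration": 0.7}
weights = {"visual": 0.5, "thermal": 0.3, "vibration": 0.2}

fused = fuse_scores(scores, weights)
visual_only_alert = scores["visual"] >= ALERT_THRESHOLD
fused_alert = fused >= ALERT_THRESHOLD
print(f"fused score: {fused:.2f}, visual-only alert: {visual_only_alert}, "
      f"fused alert: {fused_alert}")
```

In this invented case the visual channel alone would not raise an alert, while the fused score does, which is the behavior the paragraph above describes.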

Compliance requirements add a further layer of integration complexity. ISO 3834 quality requirements for fusion welding mandate digital records supporting materials traceability, procedure qualification, and personnel certification. EN 1090 execution class verification depends on automated welding parameter monitoring and documentation. IEC 61508 and ISO 13849 impose functional safety evaluation for systems providing safety-related feedback to welding controllers.

ROI Signals and Production-Scale Challenges

Financial returns from production deployments are now more precisely quantifiable than at the pilot stage. Forrester research documents a 374% average three-year ROI with a 7-8 month average payback period for AI vision inspection systems. Per-line savings average $691,200 annually in labor costs alone, before counting scrap reduction of $500,000 or more, warranty claim reductions of $1-2 million, and a 35% throughput increase.

Steel manufacturers implementing AI vision inspection achieve scrap reduction of approximately 37%, with most facilities reaching complete ROI within 14 months through combined savings in labor, scrap, rework, and warranty costs. Human visual inspection misses 20-30% of defects under real production conditions, with accuracy degrading 15-25% after just two hours of continuous observation, while inter-inspector agreement on defect severity reaches only 55-70%.

Scaling beyond the pilot, however, introduces operational challenges distinct from those encountered during controlled trials. Data labeling at production volume requires structured annotation workflows and consistent defect taxonomy across shifts and facilities. Model lifecycle management, including retraining cadence, version control, and performance drift monitoring, demands dedicated engineering resources that many mid-sized fabricators are still building. When novel defects appear, AI systems flag them for review and retrain on new data, ensuring continuous improvement rather than obsolescence.
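Performance drift monitoring can take many forms. A minimal sketch, assuming reviewers confirm or reject a sample of model decisions, is a rolling accuracy check against a baseline. The class name, the 0.97 baseline, the 0.03 tolerance, and the 100-sample window are all hypothetical.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Flags drift when rolling accuracy falls below baseline - tolerance."""

    def __init__(self, baseline_accuracy: float, tolerance: float, window: int):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.window = window
        self.outcomes = deque(maxlen=window)  # keeps only the newest results

    def record(self, prediction_correct: bool) -> None:
        """Store one reviewer-confirmed outcome."""
        self.outcomes.append(prediction_correct)

    def rolling_accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        # Wait for a full window so a few early errors do not trigger alarms.
        if len(self.outcomes) < self.window:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance


monitor = DriftMonitor(baseline_accuracy=0.97, tolerance=0.03, window=100)
for i in range(100):
    monitor.record(i >= 10)  # 10 wrong, then 90 correct
print(monitor.rolling_accuracy(), monitor.drifted())
```

A signal like `drifted()` would typically prompt a review or a retraining run rather than an automatic model swap, though that policy is a design choice outside what the article specifies.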

Deployment cost remains substantial. A comprehensive AI surface inspection deployment covering hot strip, cold mill, and coating lines runs $2 million to $5 million, though most mills achieve payback within 12-18 months from downgrade reduction alone.
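Those cost and payback figures imply a range of annual savings, which can be backed out with simple arithmetic. The sketch below assumes a straight-line payback (cost divided by a constant annual saving, with no discounting); the function name is illustrative, and pairing the extremes of the two ranges is only a bounding exercise, not a claim about any particular mill.

```python
def implied_annual_savings(deployment_cost: float, payback_months: float) -> float:
    """Annual savings needed to recover the cost within the payback period."""
    return deployment_cost * 12.0 / payback_months


# Bounding cases from the quoted ranges: $2M-$5M cost, 12-18 month payback.
low = implied_annual_savings(2_000_000, 18)   # cheapest system, slowest payback
high = implied_annual_savings(5_000_000, 12)  # costliest system, fastest payback
print(f"implied annual savings: ${low:,.0f} to ${high:,.0f}")
```

The resulting span of roughly $1.3 million to $5 million a year sits within or below the $3 million to $12 million annual downgrade losses cited in the background section, so the payback claim is at least arithmetically plausible.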

Outlook

The broader sensor fusion market underpins the long-term trajectory of these deployments. Globally, it is valued at between $9 billion and $11 billion in 2025, with projections reaching $56 billion by 2034, reflecting broad industry recognition that single-sensor approaches are nearing their practical limits. For U.S. metal fabricators, the near-term focus centers on standardizing model governance frameworks, addressing regulatory documentation requirements for safety-critical weld joints, and resolving cross-vendor PLC and MES integration as multi-site rollouts progress.