Most metalworking facilities have accumulated a patchwork of machine tools, controllers, and inspection cells from different vendors, each running proprietary logic. Integrating them into a coherent, AI-capable production system has historically required years of engineering work and millions in custom middleware. Software-defined automation (SDA) is changing that calculus: according to IDC, by 2029, 30% of factories will configure and manage control systems centrally using open, virtualized, software-defined automation platforms. For high-mix fabrication shops, where product variety has long undermined the economics of automation, the architectural shift underway may be the most consequential development of the decade.

What Software-Defined Automation Actually Means

Traditional industrial automation embeds control logic inside dedicated hardware: PLCs, proprietary DCS units, and fixed-function controllers wired directly to machines. Changing a production line means physical rewiring. Adding an AI module means deploying a separate hardware layer. SDA separates control logic from the physical machines entirely, moving it to virtualized platforms running on servers, thin clients, or edge nodes.

This approach lets manufacturers reconfigure production systems rapidly and at lower cost, integrating emerging technologies such as AI, edge computing, and digital twins without replacing hardware.

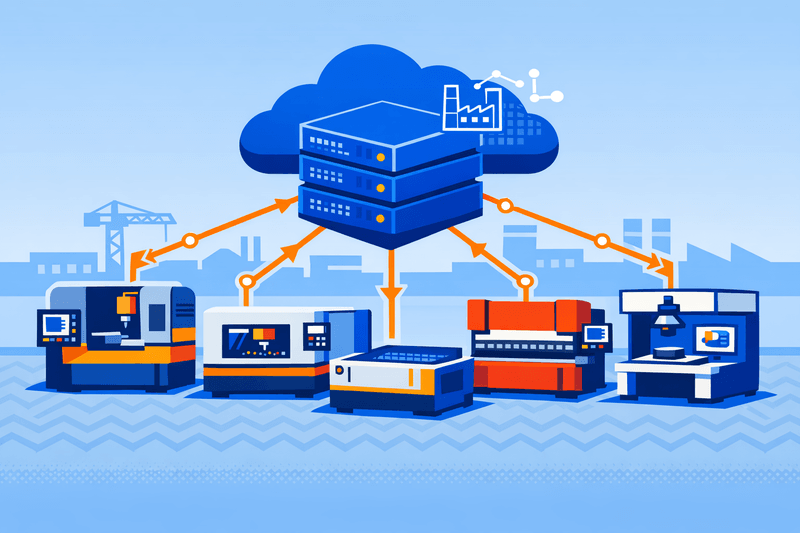

The practical implication for a metal fabrication floor: a CNC machining center, a laser cutting cell, a press brake, and a vision inspection station - each from a different OEM - can share a unified software control plane. Changeover logic, quality thresholds, and maintenance schedules update via software push rather than a field service visit.
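To make the idea of a shared control plane concrete, here is a minimal Python sketch. It assumes a hypothetical vendor-neutral `JobProfile` (part number, quality threshold, maintenance interval) and hypothetical per-OEM `MachineAdapter` classes; it is not any vendor's API. It only shows how one versioned software push can update every machine on the line instead of each being reconfigured by hand.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class JobProfile:
    """Hypothetical vendor-neutral settings pushed from the central control plane."""
    part_number: str
    version: int
    max_defect_mm: float          # quality threshold used by the vision station
    maintenance_interval_h: int   # hours between scheduled maintenance checks


class MachineAdapter:
    """Hypothetical per-OEM adapter; a real one would translate to the vendor's protocol."""

    def __init__(self, machine_id: str, oem: str) -> None:
        self.machine_id = machine_id
        self.oem = oem
        self.active: Optional[JobProfile] = None

    def apply(self, profile: JobProfile) -> None:
        # Placeholder: vendor-specific parameter writes would happen here.
        self.active = profile


@dataclass
class ControlPlane:
    adapters: List[MachineAdapter] = field(default_factory=list)
    history: List[JobProfile] = field(default_factory=list)

    def push(self, profile: JobProfile) -> Dict[str, int]:
        """Apply one profile to every registered machine; return machine_id -> active version."""
        if self.history and profile.version <= self.history[-1].version:
            raise ValueError("profile version must increase with each push")
        for adapter in self.adapters:
            adapter.apply(profile)
        self.history.append(profile)
        return {a.machine_id: profile.version for a in self.adapters}
```

Keeping an ordered history of pushed profiles is what makes each changeover traceable: the plant can see which settings every machine was running at any point.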

Interoperability: The Core Engineering Problem SDA Solves

The largest friction point in industrial AI deployment is not the AI itself - it is getting heterogeneous equipment to share data in a common language. Interoperability enables AI workloads to move between devices and across vendors, while open interfaces allow companies to mix and match hardware without rewriting code.

SDA addresses this through standardized APIs and common data models built on open protocols. Communication and orchestration standards such as MQTT, OPC UA, DDS, and Kubernetes-based edge extensions allow systems to share data securely and manage workloads consistently. For model portability, successful edge AI deployments rely on open-source software, frameworks, and open hardware architectures working together across every layer of the stack. Open frameworks like ONNX, TensorFlow Lite, and PyTorch Mobile/Edge enable developers to deploy models across multiple hardware designs - a single model can be optimized once and reused repeatedly, reducing costs and accelerating time to deployment.
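A common data model is what lets heterogeneous machines share data. The Python sketch below assumes two hypothetical vendor payload formats (field names and units invented for illustration) and normalizes both into one `SpindleReading` record, then serializes it as an MQTT-style topic and JSON payload. The topic layout `site/machine/spindle` is an assumed convention, and the code only builds the message; publishing it would require an MQTT client library, which is not shown.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class SpindleReading:
    """Hypothetical common data model shared by every machine on the line."""
    machine_id: str
    spindle_speed_rpm: float
    spindle_load_pct: float


def from_vendor_a(raw: dict) -> SpindleReading:
    # Assumed vendor A format: speed in rpm, load as a 0..1 fraction.
    return SpindleReading(raw["id"], float(raw["S"]), float(raw["load"]) * 100.0)


def from_vendor_b(raw: dict) -> SpindleReading:
    # Assumed vendor B format: speed in revolutions per second, load already in percent.
    return SpindleReading(raw["machine"], float(raw["rev_s"]) * 60.0, float(raw["load_pct"]))


def to_message(site: str, reading: SpindleReading) -> Tuple[str, str]:
    """Return a (topic, JSON payload) pair using an illustrative topic hierarchy."""
    topic = f"{site}/{reading.machine_id}/spindle"
    return topic, json.dumps(asdict(reading), sort_keys=True)
```

Once every adapter emits the same record, downstream analytics and AI models consume one schema regardless of which OEM built the machine.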

For procurement managers evaluating vendors, this translates directly into leverage: requiring OPC UA compliance and open API documentation as procurement conditions is now a technically defensible baseline.

The Architecture: Four Layers in Practice

A functional SDA stack for a high-mix metalworking environment operates across four layers:

- Shop floor (physical layer): CNC machines, laser cutters, press brakes, robotic welding cells, and vision inspection cameras. These remain as-installed, with hardware adapters or edge gateways bridging legacy protocols.

- Edge layer: Virtualized PLCs, OPC UA data brokers, and local AI inference engines. Vendors are increasingly embedding neural processing units (NPUs) directly into IPCs and controllers, enabling real-time inference without cloud latency or bandwidth costs. These NPUs fill the performance and cost gap between CPU-only analytics at the low end and GPU-based workloads at the high end.

- Orchestration layer: AI model manager, predictive maintenance engine, line reconfiguration scheduler, and digital twin synchronizer. Production logic lives here, and AI-driven decisions execute at this level.

- Enterprise/cloud layer: MES integration, ERP data feeds, cybersecurity dashboards, and multi-site deployment management. By 2027, IDC projects that 40% of all operational data will be integrated across applications and platforms autonomously, driven by increased standardization and AI agents purpose-built for specific data.
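Local inference at the edge layer does not have to start with a neural network. As a simple stand-in, the Python sketch below flags sensor samples (for example, spindle vibration) whose z-score against a trailing window exceeds a threshold. This rolling z-score test is a swapped-in statistical technique, not an NPU-accelerated model, and the window size and threshold are illustrative assumptions rather than tuned values.

```python
import math
from collections import deque


class RollingZScoreDetector:
    """Flag samples that deviate strongly from a trailing window of recent readings."""

    def __init__(self, window: int = 50, threshold: float = 3.0) -> None:
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.threshold = threshold

    def update(self, x: float) -> bool:
        """Score x against the current window, then add it; return True if anomalous."""
        anomalous = False
        n = len(self.samples)
        if n >= 2:  # at least two samples are needed for a sample standard deviation
            mean = sum(self.samples) / n
            var = sum((s - mean) ** 2 for s in self.samples) / (n - 1)
            std = math.sqrt(var)
            if std > 0 and abs(x - mean) / std > self.threshold:
                anomalous = True
        self.samples.append(x)
        return anomalous
```

Because every sample, flagged or not, enters the window, a genuine step change in the process stops being flagged once it becomes the new normal. Whether that is desirable depends on the maintenance policy the orchestration layer enforces.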

Real-world precedent: Audi set out to virtualize shop-floor automation to accelerate production, increase robustness, and enhance flexibility. Being able to deploy new features without installing new hardware lets Audi scale faster and maximize availability. Siemens delivered this with the SIMATIC S7-1500V virtual PLC, the first TÜV-certified virtual fail-safe control system. The controller is hosted in a remote data center, while its functions are deployed to the shop floor using Siemens Industrial Edge as the infrastructure.

For metalworking operations, the lesson is clear: even safety-certified control functions can now run in virtualized environments, removing the last hardware-lock argument against SDA adoption.

Cybersecurity: The Expanded Attack Surface

High-mix manufacturing environments are pushing conventional compute architectures to their limits, with rigid hardware-software integration slowing innovation at the edge. Decoupling control logic from hardware also expands the attack surface in ways that air-gapped PLC environments never faced.

Hardware must support AI workloads on devices with limited capacity, distributed resources need efficient management, and interoperability across varied edge environments must be guaranteed. Security measures are critical, as edge devices often operate under less controlled conditions than centralized servers.

The response from infrastructure vendors has been architectural. Multi-layered, zero-trust security protects AI environments at every level. Tamper-proof features, deep telemetry, consistent policies, and drift-free configurations help ensure resilience, while audit trails safeguard compliance as operations scale. Security is built in at the device level and extends through zero-trust access controls, network segmentation, and application and AI model protection. This approach addresses the expanded attack surface at the edge, helping secure AI operations from both physical and cyber threats.
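Zero-trust access control comes down to two rules: verify identity on every request, and deny anything not explicitly allowed, regardless of where on the network the request originates. The Python sketch below illustrates that default-deny logic with a hypothetical allow-list keyed on device identity, network segment, resource, and action. Certificate validation is assumed to have happened upstream (for example, during mutual TLS) and is represented here only by a boolean.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

# Hypothetical allow-list entries: (device_id, zone, resource, action).
Policy = FrozenSet[Tuple[str, str, str, str]]


@dataclass(frozen=True)
class Request:
    device_id: str   # identity asserted by the device certificate
    zone: str        # network segment the request originates from
    resource: str    # e.g. "press-brake-03/program"
    action: str      # e.g. "read" or "write"


def is_allowed(req: Request, policy: Policy, cert_valid: bool) -> bool:
    """Default deny: grant access only to a verified identity with an explicit matching rule."""
    if not cert_valid:
        return False  # an unverified identity is rejected even inside the plant network
    return (req.device_id, req.zone, req.resource, req.action) in policy
```

Including the network zone in each rule is one way to express segmentation in the same policy: the same device asking for the same resource from the wrong segment is refused.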

Software Bill of Materials (SBOM) as an OT Security Baseline

A less-discussed but increasingly critical security tool for SDA environments is the Software Bill of Materials. SBOMs provide a detailed inventory of software components, enabling organizations to identify vulnerabilities, assess risk, and make informed decisions about the software they deploy.

⚠ SBOM Requirements Are Coming to Industrial OT

CISA, in collaboration with NSA and 19 international partners, released joint guidance titled "A Shared Vision of Software Bill of Materials for Cybersecurity," a significant step toward strengthening software supply chain transparency and security worldwide. The European Union's Cyber Resilience Act (CRA), which entered into force in December 2024, includes an SBOM requirement, with its main obligations applying from December 2027. Plant managers and procurement teams sourcing SDA components should begin requiring machine-readable SBOMs from automation vendors now.

Risk remediation accelerates when companies leverage the information in their SBOM inventory. Schneider Electric's SBOMs, for example, proved instrumental during the Log4j and OpenSSL vulnerability events, helping the company quickly identify potential risks and release customer security advisories promptly. In a fabrication environment, where a single exploited edge node could halt an entire line, that speed of response is operationally significant.
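The rapid response described above is, at its core, a lookup: which deployed components match a newly disclosed vulnerability? The Python sketch below walks a CycloneDX-style JSON SBOM (a top-level `components` array, where each component may nest further `components`) and reports matches against an advisory feed. The `advisories` mapping and its version strings are hypothetical; a production tool would match on package URLs and version ranges rather than exact names and versions.

```python
import json
from typing import Dict, Iterator, List, Set


def _walk(components: List[dict]) -> Iterator[dict]:
    """Yield every component, including ones nested inside other components."""
    for comp in components:
        yield comp
        yield from _walk(comp.get("components", []))


def affected_components(sbom_json: str, advisories: Dict[str, Set[str]]) -> List[str]:
    """Return sorted 'name@version' entries whose exact version appears in the advisory feed."""
    bom = json.loads(sbom_json)
    hits = {
        f"{c.get('name')}@{c.get('version')}"
        for c in _walk(bom.get("components", []))
        if c.get("version") in advisories.get(c.get("name"), set())
    }
    return sorted(hits)
```

Run against the SBOMs of every edge node, a query like this turns "are we exposed?" from a manual audit into a quick inventory check.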

ROI Model: Modularity Over Monolithic Investment

The economics of SDA differ fundamentally from traditional automation capital plans. Rather than a large, line-specific CapEx commitment that takes two to three years to prove out, SDA enables incremental investment: deploy virtualized control on one cell, validate performance, then extend to adjacent cells using the same software stack.
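The deploy, validate, extend sequence can be expressed as a simple gated loop. In this Python sketch, `deploy` and `validate` are hypothetical callbacks standing in for whatever the SDA platform actually provides; the point is only that expansion to the next cell stops as soon as one cell fails validation.

```python
from typing import Callable, List, Optional, Tuple


def staged_rollout(
    cells: List[str],
    deploy: Callable[[str], None],
    validate: Callable[[str], bool],
) -> Tuple[List[str], Optional[str]]:
    """Deploy cell by cell; return (validated cells, first failing cell or None)."""
    validated: List[str] = []
    for cell in cells:
        deploy(cell)
        if not validate(cell):
            return validated, cell  # halt before touching any further cells
        validated.append(cell)
    return validated, None
```

The return value makes the investment decision explicit: capital is committed to the next cell only after the previous one has proven out.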

This shift also helps bridge the global shortage of automation talent. Traditional OT systems require years of specialized training, but SDA makes automation more accessible to a broader range of engineers and software specialists. It allows new generations, including those with IT or cloud backgrounds, to contribute without first mastering decades of vendor-specific hardware conventions.

For ROI modeling, the key metrics to track differ from hardware-centric deployments:

- Reconfiguration cost per changeover (target: near-zero, compared with hours of engineering time in hardware-centric setups)

- Predictive maintenance uplift - reduction in unplanned downtime compared to scheduled-maintenance baseline

- Time-to-deploy AI model updates across a multi-machine line

- Multi-site replication cost - deploying the same control configuration to a second facility without re-engineering
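Each of these metrics reduces to simple arithmetic once the underlying data is captured. The Python helpers below are one hedged way to compute them; the function names and the cost breakdown (engineering labour plus lost output per changeover) are assumptions for illustration, and any figures plugged in should come from the facility's own baseline.

```python
from datetime import datetime, timedelta
from typing import List


def changeover_cost(engineering_hours: float, hourly_rate: float, lost_output_value: float) -> float:
    """Reconfiguration cost per changeover: engineering labour plus value of lost output."""
    return engineering_hours * hourly_rate + lost_output_value


def downtime_reduction_pct(baseline_hours: float, current_hours: float) -> float:
    """Predictive-maintenance uplift: % drop in unplanned downtime vs. the baseline period."""
    if baseline_hours <= 0:
        raise ValueError("baseline downtime must be positive")
    return 100.0 * (baseline_hours - current_hours) / baseline_hours


def time_to_deploy(start: datetime, machine_finish_times: List[datetime]) -> timedelta:
    """Model-update rollout time: from release until the last machine reports the update."""
    return max(machine_finish_times) - start


def replication_cost_ratio(second_site_cost: float, first_site_cost: float) -> float:
    """Multi-site replication: cost of the second facility as a fraction of the first."""
    return second_site_cost / first_site_cost
```

For example, 40 hours of unplanned downtime in the baseline period falling to 30 hours after a predictive-maintenance pilot is a 25% reduction.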

Software-defined automation for products, assets, and facilities is becoming mission critical, and low-code development tools are playing a growing role: organizations need a rapid, streamlined way to create and update the software that drives data movement, analytics, ecosystem collaboration, and overall operations.

SDA vs. Traditional Automation: A Structural Comparison

| Attribute | Traditional Automation | Software-Defined Automation |
|---|---|---|
| Control logic location | Embedded in hardware PLCs | Virtualized - servers, edge nodes, or cloud |
| Reconfiguration method | Physical rewiring or hardware swap | Software update via API |
| Vendor interoperability | Proprietary, closed protocols | Open APIs, OPC UA, standardized data models |
| AI/ML integration | Requires separate hardware layers | Native - models deploy directly to control plane |
| Predictive maintenance | Reactive or schedule-based | Continuous, AI-driven anomaly detection at edge |
| Cybersecurity posture | Air-gapped, limited visibility | Zero-trust, SBOM-based supply-chain transparency |
| Capital investment model | Large upfront CapEx per line | Incremental, modular - pay-as-you-scale |
| Multi-site replication | Manual re-engineering per facility | Centralized config deployed across all facilities |
| Workforce skill profile | OT/PLC specialists | IT/OT hybrid - software engineers + OT context |

Workforce Implications: New Roles on the Shop Floor

SDA does not eliminate the need for OT expertise - it recombines it with software engineering competencies that most fabrication shops have never recruited for. Engineers gain faster iteration and stronger validation, operations teams achieve more reliable and scalable deployments, and executives gain a clearer model for rolling out automation programs across multiple sites with consistent performance.

The roles emerging in SDA-equipped facilities include:

- Edge DevOps engineers: Deploy, monitor, and update containerized control applications across distributed edge nodes.

- AI model governance leads: Certify that inference models meet performance and safety thresholds before production deployment. This function has no direct equivalent in traditional OT.

- IT/OT integration architects: Design data pathways between shop-floor equipment, edge compute, MES, and ERP systems.

- Cybersecurity analysts (OT-focused): Manage identity certificates for edge devices, review SBOMs for newly disclosed CVEs, and maintain zero-trust policy enforcement.

The long-standing divide between IT and operational technology will continue to dissolve. Manufacturers are increasingly applying IT workflows, containerization, and modern management tools directly to factory-floor environments. This convergence enables more consistent infrastructure strategies, bringing cybersecurity, automation, and lifecycle management into industrial operations in a unified way.

Facilities that invest in cross-training existing technicians through structured IT/OT upskilling programs - rather than waiting to hire purpose-built profiles - will compress their readiness timeline.

Standards Momentum and Industry Collaboration

The standards landscape is consolidating quickly. In March 2025, Accenture and Siemens launched the Accenture Siemens Business Group, dedicating a 7,000-person team to building software-defined products and factories with deep AI integration. Major automation vendors - including Schneider Electric, Rockwell Automation, ABB, and Emerson - have all publicly committed to software-defined and open-architecture roadmaps.

NIST is investing $20 million to establish two centers, including an AI Economic Security Center for U.S. Manufacturing Productivity, to advance AI-based technology solutions and strengthen manufacturing competitiveness. In parallel, NIST is planning to announce its award for the AI for Resilient Manufacturing Institute, backed by up to $70 million over five years from NIST and matching non-federal funding.

At the protocol level, the Open Process Automation Forum (OPAF), OPC UA working groups, and IEC technical committees are actively harmonizing data schemas, interface specifications, and semantic models to reduce integration friction as deployments scale across multi-facility supply networks.

Takeaways for Fabrication Operations

The transition to software-defined automation is not a single project - it is an architectural posture that determines how quickly a facility can absorb new AI capabilities, respond to product mix changes, and replicate improvements across sites.

For plant managers and process engineers evaluating SDA adoption:

- Start with the data layer. Deploying OPC UA-compliant gateways across existing equipment is a lower-risk first step that unlocks AI analytics without changing control logic.

- Require SBOMs in vendor contracts today. The regulatory environment is converging on this requirement. Early adoption builds institutional muscle before compliance deadlines arrive.

- Pilot predictive maintenance on one high-value machine. This demonstrates the edge AI ROI model with minimal integration risk and builds the organizational confidence needed for broader deployment.

- Define model governance procedures before deploying AI to control. Establishing validation and rollback protocols for AI models is a safety and operational continuity requirement, not an afterthought.
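A validation-and-rollback protocol can start as an explicit gate in code. The Python sketch below promotes a candidate model only if it meets hypothetical acceptance thresholds (minimum recall, maximum false-alarm rate), treats a missing metric as a failure, and keeps a version history so the previously approved model can be restored. Metric names and threshold values are placeholders, not recommended settings.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical acceptance thresholds a model governance lead might sign off on.
MIN_THRESHOLDS = {"recall": 0.95}
MAX_THRESHOLDS = {"false_alarm_rate": 0.05}


@dataclass(frozen=True)
class ModelCandidate:
    version: str
    metrics: Dict[str, float]  # results from offline validation


class ModelRegistry:
    def __init__(self) -> None:
        self.deployed: List[str] = []  # promoted versions, newest last

    def passes_gate(self, cand: ModelCandidate) -> bool:
        # A missing metric defaults to a failing value, so it can never pass silently.
        ok_min = all(cand.metrics.get(k, float("-inf")) >= v for k, v in MIN_THRESHOLDS.items())
        ok_max = all(cand.metrics.get(k, float("inf")) <= v for k, v in MAX_THRESHOLDS.items())
        return ok_min and ok_max

    def promote(self, cand: ModelCandidate) -> bool:
        """Deploy the candidate only if it clears every threshold."""
        if not self.passes_gate(cand):
            return False
        self.deployed.append(cand.version)
        return True

    def rollback(self) -> Optional[str]:
        """Revert to the previously promoted version; return the now-active version."""
        if len(self.deployed) > 1:
            self.deployed.pop()
        return self.active

    @property
    def active(self) -> Optional[str]:
        return self.deployed[-1] if self.deployed else None
```

A rejected candidate never reaches the line, and a promoted model that misbehaves in production can be reverted in one call rather than through an emergency engineering change.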

Shops already advancing vision-guided automation at the machine-cell level now have an architecture that turns isolated AI applications into a coordinated, software-managed production network, one that reconfigures at software speed rather than on hardware lead times.

FAQ

What is the difference between software-defined automation and traditional industrial automation?

Traditional automation embeds control logic in dedicated hardware PLCs and proprietary controllers. SDA decouples that logic from hardware, running it on virtualized platforms - servers, edge nodes, or cloud infrastructure - that can be updated, reconfigured, or replicated through software changes alone. This eliminates the physical rewiring required when changing product lines or integrating new equipment.

Is SDA suitable for high-mix, low-volume metal fabrication environments?

Yes - and it is arguably better suited to high-mix environments than to high-volume lines. Because product changeovers can be handled through software reconfiguration rather than hardware changes, SDA dramatically reduces the setup overhead that historically made automation uneconomical in low-volume shops. Standardized APIs and open data models allow diverse machine types - CNC machining centers, press brakes, laser cutters, vision inspection cells - to participate in a unified control and data fabric.

What cybersecurity risks does SDA introduce compared to legacy OT systems?

Moving control logic into software and connecting it to networks expands the attack surface considerably relative to air-gapped, hardware-bound PLCs. Key risks include unauthorized access to virtualized control planes, exploitation of software dependencies in third-party components, and manipulation of AI model inputs. Mitigations include zero-trust network segmentation, cryptographic identity management for edge devices, and SBOM visibility to track component provenance and respond rapidly to disclosed vulnerabilities.

What standards govern interoperability in software-defined manufacturing?

OPC UA (IEC 62541) is the dominant protocol for secure, semantic data exchange between industrial devices and software layers, and MQTT is widely used for lightweight publish/subscribe messaging at the edge. For AI model portability, open frameworks such as ONNX, TensorFlow Lite, and PyTorch Mobile/Edge are widely adopted. Orchestration technologies such as Kubernetes-based edge extensions support consistent workload management across distributed nodes.

How should manufacturers approach the SDA workforce transition?

The transition requires developing a hybrid IT/OT skill profile that did not previously exist on most shop floors. Existing OT technicians can be upskilled through structured programs covering containerized application deployment, API integration, and AI model governance. Hiring software engineers with industrial domain onboarding provides a faster bridge. Model validation engineering - certifying AI model performance before production deployment - represents an entirely new job category that forward-looking facilities should define and staff now.