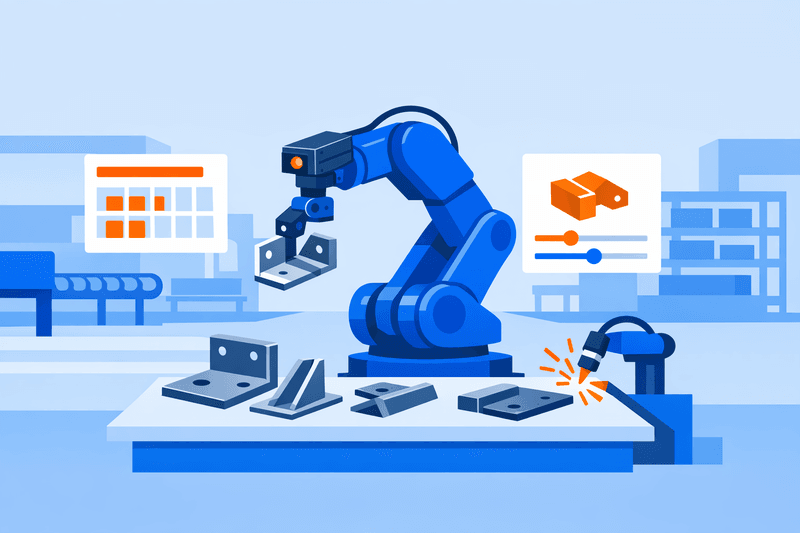

Vision-guided automation is rapidly moving from specialized applications to a primary role in high-mix metal fabrication. For plants managing frequent changeovers, diverse weldments, and short production runs, integrating industrial robots with machine vision is redefining what can be automated. This analysis examines how vision-enabled robots are reshaping workflows across the automotive, aerospace, and general manufacturing sectors, with implications for standards, workforce competencies, and investment planning.

The Bigger Picture: From Fixed Cells to Vision-Guided Robotics

In the past decade, industrial robotics has evolved from rigid, high-volume cells toward adaptable, software-driven automation.

There were about 4.66 million operational industrial robots in factories worldwide by the end of 2024, more than double the installed base a decade earlier. This growth indicates not only broader automation adoption but also diversification beyond traditional automotive body-in-white lines.

The International Federation of Robotics reports that 542,000 new industrial robots were installed globally in 2024, matching the previous year's record level. While automotive and electronics dominate, metal and machinery applications now account for roughly 10% of the global operational industrial robot stock, signaling wider use in cutting, welding, machining, and materials handling.

In metalworking, the trend is moving from single-purpose welding cells to flexible manufacturing, where:

- Robots shift between product families.

- Programs are updated in hours or minutes instead of weeks.

- Machine vision manages part variation, orientation, and in-process measurement.

This shift is most visible in high-mix, low-volume (HMLV) settings, where traditional hard automation often lacked economic justification.

Why High-Mix Metal Fabrication Needs a Different Automation Playbook

High-mix metal fabrication (common in job shops and in aerospace parts, specialty vehicle structures, and industrial equipment) faces distinct challenges:

- Frequent changeovers: Dozens or hundreds of part numbers monthly, varying in geometry, material, and weld process.

- Short runs: Batch sizes from prototypes to hundreds, making long setups uneconomical.

- Variable fixturing: Changing fixtures, clamps, and tack welds affect seam locations and tolerances.

- Strict quality demands: Industries like aerospace, medical, and energy require traceability and consistent weld or cut quality.

Industry analysis identifies rising demand for high-mix, low-volume parts in the medical, aerospace, and automotive sectors as a key driver for adopting flexible manufacturing systems over fixed automation.

As a result, HMLV fabricators require automation solutions that:

- Accommodate geometric and fixture variability.

- Enable fast changeovers without significant manual programming.

- Integrate with planning systems for dynamic sequencing.

Vision-guided robotics addresses these core requirements.

What Vision-Guided Automation Changes on the Shop Floor

Machine vision allows robots to adapt to real part conditions, impacting changeover efficiency, quality control, and production scheduling in high-mix metalwork.

Shorter Changeovers and More Dynamic Scheduling

Traditional robotic fabrication cells typically rely on:

- Dedicated fixtures for repeatable positioning.

- Manual programming and offline adjustment for every part.

- PLC- or controller-specific changeover steps.

Vision-guided systems enable:

- Automatic part localization: Cameras determine part position and orientation; robots compensate in real time.

- Feature-based alignment: Vision systems locate reference points, edges, or weld seams and adjust robot paths accordingly.

- Recipe-driven changeovers: Operators select product families; the system loads the corresponding detection patterns.
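As an illustration of automatic part localization, here is a minimal Python sketch under several assumptions: the part is treated as planar (2D), the vision system has already detected at least two reference features and matched them to their nominal CAD positions, and both point sets are in millimetres in a calibrated common frame. The code fits a least-squares rotation and translation (a 2D form of the Kabsch method) and applies it to the taught path. All function names and the 0.5 mm residual limit are hypothetical, not taken from any vendor's software.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def fit_rigid_2d(nominal: List[Point], measured: List[Point]) -> Tuple[float, float, float]:
    """Least-squares rotation + translation mapping nominal features onto measured ones.

    Returns (theta_rad, tx, ty) such that measured ~= R(theta) * nominal + t.
    """
    if len(nominal) != len(measured) or len(nominal) < 2:
        raise ValueError("need at least two matched reference features")
    n = len(nominal)
    # Centroids of the nominal (CAD) and measured (camera) feature sets
    cnx = sum(p[0] for p in nominal) / n
    cny = sum(p[1] for p in nominal) / n
    cmx = sum(p[0] for p in measured) / n
    cmy = sum(p[1] for p in measured) / n
    # Sum dot and cross products of centered pairs; the optimal angle is atan2(cross, dot)
    s_dot = s_cross = 0.0
    for (ax, ay), (bx, by) in zip(nominal, measured):
        ax, ay, bx, by = ax - cnx, ay - cny, bx - cmx, by - cmy
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation carries the rotated nominal centroid onto the measured centroid
    tx = cmx - (c * cnx - s * cny)
    ty = cmy - (s * cnx + c * cny)
    return theta, tx, ty


def apply_transform(points: List[Point], theta: float, tx: float, ty: float) -> List[Point]:
    """Rotate by theta, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def max_residual(nominal: List[Point], measured: List[Point],
                 theta: float, tx: float, ty: float) -> float:
    """Largest gap between transformed nominal features and what the camera reported."""
    predicted = apply_transform(nominal, theta, tx, ty)
    return max(math.dist(p, m) for p, m in zip(predicted, measured))


if __name__ == "__main__":
    nominal = [(0.0, 0.0), (100.0, 0.0), (0.0, 50.0)]                 # CAD reference features
    measured = apply_transform(nominal, math.radians(2.0), 5.0, -2.0)  # simulated camera result
    theta, tx, ty = fit_rigid_2d(nominal, measured)
    if max_residual(nominal, measured, theta, tx, ty) > 0.5:           # hypothetical limit, mm
        raise RuntimeError("localization residual too high; route part to an operator")
    taught_path = [(10.0, 10.0), (90.0, 10.0)]
    print(apply_transform(taught_path, theta, tx, ty))
```

The residual check is one way to turn a doubtful detection into an operator exception instead of a silent path error. Parts that are not flat, or that can tilt in the fixture, would need full 3D pose estimation.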

Examples from pick-and-place and machining applications show changeovers reduced to about one minute when vision setup and recipe selection are unified, enabling a single cell to handle hundreds of part types. This replaces multi-step manual programming across separate robot and camera interfaces.
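A recipe-driven changeover can be pictured as a single lookup that returns both the vision settings and the robot program for a product family, so the operator makes one selection instead of configuring camera and robot separately. The sketch below is hypothetical: the `Recipe` fields, family names, and parameter values are illustrative assumptions, not a specific vendor's data model.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Recipe:
    """Everything the cell loads for one product family (all fields are illustrative)."""
    family: str
    detection_model: str      # vision pattern or model used to localize the part
    robot_program: str        # parameterized robot program for this family
    wire_feed_m_min: float    # example weld parameter, metres per minute
    travel_speed_mm_s: float  # example weld parameter, millimetres per second


class RecipeBook:
    """Hypothetical registry that keeps vision and robot settings in one record."""

    def __init__(self) -> None:
        self._recipes: Dict[str, Recipe] = {}

    def register(self, recipe: Recipe) -> None:
        self._recipes[recipe.family] = recipe

    def changeover(self, family: str) -> Recipe:
        """Return the complete recipe for the selected family, or fail loudly."""
        if family not in self._recipes:
            raise KeyError(f"no recipe for family {family!r}; part needs engineering review")
        return self._recipes[family]


if __name__ == "__main__":
    book = RecipeBook()
    book.register(Recipe("bracket-A", "bracket_a_edges", "weld_bracket_a", 8.5, 6.0))
    book.register(Recipe("frame-B", "frame_b_corners", "weld_frame_b", 9.0, 5.0))
    active = book.changeover("frame-B")
    print(active.detection_model, active.robot_program)
```

Keeping the detection model and robot program in one record is the design point: a single operator selection cannot load the camera pattern for one family and the weld program for another.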

A recent EIT Manufacturing report highlights a software platform used in metalworking, machining, and assembly. The platform enables autonomous, collision-free trajectory planning and rapid reconfiguration of robotic cells, supporting flexible automation in high-mix, low-volume environments with frequent product or layout changes. Such platforms, combined with vision, underpin dynamic scheduling, allowing real-time prioritization and robot reconfiguration driven by digital work orders.

This approach shifts robots from single-task assets to shared production resources managed through digital workflows.
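To illustrate why digital work orders matter for scheduling, the sketch below compares two hypothetical sequencing rules for one cell: strict earliest-due-date order versus grouping orders by product family so the cell changes over less often. It assumes one changeover whenever consecutive orders belong to different families; it is a simple heuristic for illustration, not the method of the platform described in the EIT Manufacturing report.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class WorkOrder:
    order_id: str
    family: str
    due_hour: int  # hours from now (hypothetical unit)


def count_changeovers(seq: List[WorkOrder]) -> int:
    """One changeover each time consecutive orders belong to different families."""
    return sum(1 for a, b in zip(seq, seq[1:]) if a.family != b.family)


def earliest_due_first(orders: List[WorkOrder]) -> List[WorkOrder]:
    return sorted(orders, key=lambda o: o.due_hour)


def batch_by_family(orders: List[WorkOrder]) -> List[WorkOrder]:
    """Group orders per family; run families in order of their most urgent order."""
    by_family: Dict[str, List[WorkOrder]] = {}
    for o in earliest_due_first(orders):
        by_family.setdefault(o.family, []).append(o)
    families = sorted(by_family, key=lambda f: by_family[f][0].due_hour)
    return [o for f in families for o in by_family[f]]


if __name__ == "__main__":
    orders = [WorkOrder("A", "X", 2), WorkOrder("B", "Y", 3),
              WorkOrder("C", "X", 4), WorkOrder("D", "Y", 5)]
    print(count_changeovers(earliest_due_first(orders)),
          count_changeovers(batch_by_family(orders)))
```

In this example, batching cuts changeovers from three to one, but it also moves order B behind order C; a real scheduler would weigh that delay against the changeover time saved.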

Robotic Welding in High-Mix Environments

Robotic welding, once reserved for repetitive, high-volume production, now benefits from vision systems that overcome batch variability.

Emerging trends include:

- Laser and camera-based seam tracking: Cameras near the torch detect joint location and orientation, addressing distortion, gap changes, and fixture tolerances.

- Automatic path generation: AI-enhanced software creates weld paths from 3D scans or CAD, reducing manual teaching for new variants.

- In-process quality monitoring: Sensors and vision measure bead appearance and penetration, adjusting parameters in real time.
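Laser seam tracking can be sketched as a small control loop. The example assumes a laser-line sensor that returns one height profile per cycle in the torch frame, a V-groove whose root is the lowest point in that profile, and a per-cycle correction limit so that a single noisy frame cannot jerk the torch. The simulated groove, the 0.1 mm sampling, and the 0.5 mm step limit are hypothetical; commercial trackers use more robust feature extraction and filtering than a plain minimum search.

```python
from typing import Sequence


def find_groove_root(y_mm: Sequence[float], z_mm: Sequence[float]) -> float:
    """Lateral position of the lowest point in one laser-line profile (assumed groove root)."""
    if not y_mm or len(y_mm) != len(z_mm):
        raise ValueError("profile must be non-empty with matching y and z samples")
    i = min(range(len(z_mm)), key=lambda k: z_mm[k])
    return y_mm[i]


def lateral_correction(root_y: float, nominal_y: float, max_step_mm: float = 0.5) -> float:
    """Offset for this cycle, clamped so one noisy frame cannot jerk the torch."""
    error = root_y - nominal_y
    return max(-max_step_mm, min(max_step_mm, error))


def simulate_tracking(seam_offset_mm: float, cycles: int, max_step_mm: float = 0.5) -> float:
    """Toy loop: an ideal V-groove sits seam_offset_mm from the taught path.

    The sensor reports profiles in the torch frame, so the groove appears to move
    as the torch is corrected. Returns the accumulated torch offset.
    """
    torch_y = 0.0
    ys = [i * 0.1 - 5.0 for i in range(101)]  # 0.1 mm sampling across +/-5 mm
    for _ in range(cycles):
        zs = [abs(y - (seam_offset_mm - torch_y)) for y in ys]  # V-shaped profile
        root = find_groove_root(ys, zs)
        torch_y += lateral_correction(root, 0.0, max_step_mm)
    return torch_y


if __name__ == "__main__":
    for n in (1, 2, 3, 5):
        print(n, round(simulate_tracking(1.2, n), 3))
```

Running the simulation with a 1.2 mm seam offset shows the clamped corrections reaching the seam over a few cycles rather than in one jump.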

One academic study reports a 12.3% reduction in weld defects and a 9.8% increase in welding effectiveness after optimizing a laser-vision-guided robotic welding process. Vendor data also indicates that vision-guided welding achieves near-perfect seam accuracy, minimizing rework.

Shop floor developments include:

- AI product recognition: Systems use AI to recognize weldment geometries, prompting best-practice weld parameters and enabling autonomous welding in HMLV cells.

- Low- or no-programming solutions: Vision and AI detect components and generate weld trajectories automatically, so operators specify intent rather than program paths; vendors report multiple such robots running in continuous HMLV production.

- Focus on high-mix job shops: Vendors report that AI and vision reduce the need for offline programming, making it practical to automate work previously handled by skilled manual welders.

These capabilities reposition robotic welding from fixed, part-specific use to a flexible tool for:

- Frequent design changes in structural frames.

- Small-batch spare parts.

- Complex welds requiring precise repeatability.

Machine Vision for Inspection and Adaptive Quality Control

Inspection is a significant area of impact for vision-guided automation in high-mix settings.

For example, one aerospace manufacturer reports that it:

- Deployed nearly 50 AI-enabled cobots with integrated vision.

- Automated 10 complex applications spanning inspection and part handling.

- Saved more than 80,000 production hours at a precision metal plant by reducing manual tasks and changeover overhead.

AI vision supports flexible switching between products and automated optical inspection (AOI), detecting features such as screw presence, punched holes, and dimensional compliance.

Research also explores defect detection for non-convex cylindrical parts, where reflections and geometry impede traditional vision. Domain-adaptive models now maintain accuracy across various metal parts and lighting, reducing re-engineering needs for each new variant.

For plants, this results in:

- Faster inspection program creation for new parts.

- More consistent detection than manual inspection.

- Data-driven defect logs for process improvement.
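A data-driven defect log can start as simply as one structured row per inspected feature. The sketch below assumes the vision system already reports each hole's measured centre and diameter keyed by a feature ID; the `HoleSpec` fields, tolerances, and result labels are hypothetical. The position check is a simplified radial distance; GD&T true-position tolerances specify a diameter zone, so real limits must come from the part drawing.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class HoleSpec:
    feature_id: str
    x_mm: float
    y_mm: float
    diameter_mm: float
    pos_tol_mm: float  # allowed radial distance from nominal centre (simplified, not GD&T)
    dia_tol_mm: float  # allowed +/- deviation in diameter


def inspect_holes(specs: List[HoleSpec],
                  measured: Dict[str, Tuple[float, float, float]]) -> List[dict]:
    """Compare measured (x, y, diameter) per feature with nominal; one log row per hole."""
    log = []
    for spec in specs:
        m = measured.get(spec.feature_id)
        if m is None:
            # A hole the camera did not find is logged as its own defect type
            log.append({"feature": spec.feature_id, "result": "MISSING"})
            continue
        x, y, d = m
        pos_err = math.hypot(x - spec.x_mm, y - spec.y_mm)
        dia_err = d - spec.diameter_mm
        ok = pos_err <= spec.pos_tol_mm and abs(dia_err) <= spec.dia_tol_mm
        log.append({
            "feature": spec.feature_id,
            "result": "PASS" if ok else "FAIL",
            "pos_err_mm": round(pos_err, 3),
            "dia_err_mm": round(dia_err, 3),
        })
    return log


if __name__ == "__main__":
    specs = [
        HoleSpec("H1", 10.0, 10.0, 6.0, 0.2, 0.1),
        HoleSpec("H2", 50.0, 10.0, 6.0, 0.2, 0.1),
        HoleSpec("H3", 90.0, 10.0, 8.0, 0.2, 0.1),
    ]
    measured = {"H1": (10.05, 10.0, 6.02), "H3": (90.3, 10.0, 8.0)}
    for row in inspect_holes(specs, measured):
        print(row)
```

Logging the numeric errors, not just pass/fail, is what lets engineers spot drift (for example, a fixture slowly shifting) before parts start failing.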

Cross-Industry Adoption: Automotive, Aerospace, and General Manufacturing

Adoption patterns for vision-guided automation differ by sector.

Comparative View by Sector

| Sector | Product mix & volume | Main robotic tasks in metalworking | Role of machine vision | Key drivers |
|---|---|---|---|---|
| Automotive | Medium/high volume; more model variants | Spot/arc welding, materials handling, fastening | Part localization, seam/gap tracking, in-line inspection | Variant complexity, traceability, EV programs |
| Aerospace | High-mix; low/medium-volume structures and engines | Drilling, fastening, milling, welding | Precision alignment, hole verification, defect inspection | Labor, precision, rework, MRO workloads |
| General & job shops | HMLV parts, batches from 1 to thousands | CNC tending, cutting, welding, deburring, inspection | Feature recognition, flexible loading, adaptive QC | Labor shortage, lead times, margin pressure |

Examples include:

- Automotive and suppliers: Automation extends from body-in-white lines to flexible subassemblies, where frequent changes demand vision-enabled robotics.

- Aerospace: Precision robots are closing the gap with machine tools, achieving tolerances down to 0.1 mm on large structures. In MRO, vision-guided robots automate drilling, defastening, and inspection, reducing manual labor and improving consistency.

- Job shops: Cobots and vision systems are widely used for CNC tending and inspection, even in small-batch settings, enabling unmanned operations and lowering costs.

Decision-makers should note that vision-guided automation is accessible to medium-size shops, not just flagship OEM plants, as core technologies like vision localization and standardized data interfaces become widely available.

Data Interfaces and Interoperability: The Hidden Enabler

Vision-guided automation must integrate with the wider manufacturing stack: CNCs, welding sources, MES/ERP, and quality systems. Here, standardization is crucial.

OPC UA and Machine Vision Standards

OPC UA has emerged as the leading interoperability protocol for secure, reliable industrial data. In 2019, a global working group led by VDMA and the OPC Foundation released the first unified information model for machine vision:

- The OPC UA Machine Vision Part 1 Companion Specification defines a standardized, abstract information model for vision systems, effectively a "digital twin" that enables interoperable communication across platforms.

- Developed by stakeholders from North America, Europe, China, and Japan, this approach harmonizes machine vision protocols internationally.

Currently, VDMA manages about 40 OPC UA working groups covering machine tools, robotics, plastics, metrology, and more. Programs like umati support widespread implementation, promoting easy integration across vendors.

For metal fabrication, these standards offer:

- Consistent device models: Uniform data structures for robots, vision, and welding systems, simplifying integration.

- Easier MES connectivity: Process data is accessible to higher-level systems without custom drivers.

- Vendor flexibility: Plants can combine solutions from different suppliers if they follow shared specifications.

Adoption pace varies, but standardized data interfaces are central to future-ready vision-guided cells, replacing fragmented, proprietary connections.

Workforce and Role Changes in Vision-Guided Cells

Vision-guided automation shifts, rather than eliminates, skill requirements.

One aerospace manufacturer using dozens of AI-enabled cobots reports freed production hours and redeployment of staff from hazardous manual tasks to higher-value technical and management roles.

Skills and Training Priorities

Important capability areas include:

- Cell configuration: Operators manage recipe selection, path verification, and exceptions, rather than programming all robot paths manually.

- Vision system expertise: Camera calibration, lighting, lens choice, and AI model training are vital for robustness to part variation.

- Process knowledge: Human experts define parameter ranges, quality benchmarks, and corrective responses in welding and cutting.

- Data literacy: Engineers interpret logs, defect reports, and dashboards to refine planning and process settings.

- Safety and collaboration: As cobots and shared spaces proliferate, knowledge of collaborative safety standards and risk assessment becomes critical.

Operator roles are evolving into "cell supervisor" or "production technologist," responsible for:

- Approving new products for automation.

- Monitoring KPIs such as yield and OEE.

- Coordinating maintenance and IT/OT updates.
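Supervisors monitoring OEE are working with a standard decomposition: OEE is the product of availability (run time over planned time), performance (ideal cycle time times parts made, over run time), and quality (good parts over parts made). The sketch below assumes changeover minutes are counted as downtime, which is one common convention; some plants instead remove planned changeovers from planned time, which raises availability. The shift figures are hypothetical.

```python
from typing import NamedTuple


class OEE(NamedTuple):
    availability: float
    performance: float
    quality: float
    oee: float


def compute_oee(planned_min: float, downtime_min: float, ideal_cycle_min: float,
                total_count: int, good_count: int) -> OEE:
    """Standard OEE breakdown for one cell over one planned period."""
    if planned_min <= 0 or total_count <= 0 or downtime_min >= planned_min:
        raise ValueError("need positive planned time, some run time, and at least one part")
    run_min = planned_min - downtime_min                    # time the cell actually ran
    availability = run_min / planned_min
    performance = ideal_cycle_min * total_count / run_min  # actual vs. ideal rate
    quality = good_count / total_count                      # first-pass yield
    return OEE(availability, performance, quality, availability * performance * quality)


if __name__ == "__main__":
    # Hypothetical shift: 480 min planned, 60 min lost (changeovers + stops),
    # 2.0 min ideal cycle, 180 parts made, 171 good.
    print(compute_oee(480, 60, 2.0, 180, 171))
```

With these figures OEE works out to 0.7125, which equals good parts times ideal cycle time divided by planned time (171 × 2.0 / 480); that identity is a quick sanity check on any dashboard.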

Investment and Implementation Considerations

For high-mix fabricators exploring vision-guided automation, key factors include:

1. Process Selection and Phasing

Vision guidance is most valuable where:

- Manual effort and ergonomic risks are high.

- Product mix or geometry is variable.

- Quality or traceability requirements are strict.

Common starting points:

- CNC tending for diverse part families

- Welding repeatable subassemblies with variable placement

- Visual inspection of key features

A staged approach, starting with pilots and then expanding, helps manage risk and build expertise.

2. Tooling and Fixturing Strategy

Vision minimizes, but does not eliminate, fixture needs. Effective practice includes:

- Designing fixtures with identifiable reference features.

- Balancing adjustability for variants with rigidity for precision.

- Combining vision compensation with sound fixturing rather than relying on either alone.

3. Digital Infrastructure and Standards Alignment

Scalable adoption depends on:

- OPC UA or equivalent standardized protocols.

- MES/ERP strategies to collect data from robots and vision systems.

- Robust networking for real-time control and secure remote access.

Equipment aligned with companion specifications simplifies long-term integration.

4. Total Cost of Ownership and Changeover Economics

ROI calculations previously focused on labor savings. In high-mix settings, benefits also include:

- Reduced changeover time and increased uptime.

- Lower rework and scrap rates from better inspection.

- Flexibility for short runs and prototype work.

U.S. robot installations grew 8% in metal processing and machinery manufacturing in 2023, reaching 4,171 units, signaling confidence in automating variable production. Similar patterns are emerging globally.
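The changeover and scrap benefits listed above can be roughed out with simple arithmetic. The sketch below converts changeover minutes saved into cell hours per year, adds the value of reduced scrap and rework, and computes a simple payback. Every input is a placeholder assumption to be replaced with plant data, and the model ignores financing, ramp-up, and maintenance costs.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CellCase:
    """Inputs for a rough business case; every value is an assumption to replace."""
    changeovers_per_week: float
    old_changeover_min: float
    new_changeover_min: float
    weeks_per_year: float
    cell_value_per_hour: float    # contribution of one productive cell hour
    parts_per_year: float
    old_scrap_rate: float         # fraction of parts scrapped or reworked
    new_scrap_rate: float
    cost_per_scrapped_part: float
    investment: float


def annual_benefit(c: CellCase) -> Tuple[float, float, float, float]:
    """Return (hours freed, value of freed hours, scrap savings, simple payback in years)."""
    hours_freed = (c.changeovers_per_week * (c.old_changeover_min - c.new_changeover_min)
                   * c.weeks_per_year / 60.0)
    capacity_value = hours_freed * c.cell_value_per_hour
    scrap_savings = (c.parts_per_year * (c.old_scrap_rate - c.new_scrap_rate)
                     * c.cost_per_scrapped_part)
    total = capacity_value + scrap_savings
    payback_years = c.investment / total if total > 0 else float("inf")
    return hours_freed, capacity_value, scrap_savings, payback_years


if __name__ == "__main__":
    case = CellCase(20, 90, 10, 48, 120, 10_000, 0.04, 0.015, 35, 250_000)
    print(annual_benefit(case))
```

With the example inputs (20 changeovers a week cut from 90 to 10 minutes over 48 weeks), the cell gains 1,280 hours a year. Those hours only turn into money if there is demand to fill them, so the `cell_value_per_hour` input deserves the most scrutiny.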

Frequently Asked Questions

What is vision-guided automation in metal fabrication?

Vision-guided automation employs cameras and algorithms to provide robots with feedback on part location, orientation, and status. Typical uses are:

- Loading/unloading of CNCs

- Weld seam tracking in robotic welding

- Inspection of dimensions or surface features

Unlike in traditional automation, robot programs adapt based on vision inputs rather than relying solely on fixture precision.

Does vision-guided robotic welding work for small batches?

Yes, if systems are built for rapid path generation and recipe control. In HMLV production, automation ROI depends on:

- Speed of new-part programming or adaptation

- Reduction in manual fitting and rework

- Gains in consistency and traceability

AI-enabled path generation and seam tracking minimize per-part engineering, making automation profitable for small runs and for areas where manual welding is a bottleneck.

How do machine vision systems integrate with CNCs and welding equipment?

Integration involves two layers:

- Real-time control: Vision guidance exchanges data with robot controllers via industrial Ethernet or device-specific protocols, enabling real-time path correction.

- Information layer: Inspection and process results are shared with MES, QA, or analytics via OPC UA or similar standards.

Support for companion specifications reduces custom integration, improving long-term maintainability.
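The difference between the two integration layers shows up in the data itself. In the sketch below, the real-time correction is a compact, fixed-size message, while the information-layer record is richer and self-describing. The byte layout, class names, and JSON field names are hypothetical; they are not taken from a robot vendor protocol or from the OPC UA Machine Vision companion specification, which defines its own information model and would normally be exposed through an OPC UA server rather than ad hoc JSON.

```python
import json
import struct
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class PathCorrection:
    """Real-time layer: a small fixed-size offset sent to the robot controller each cycle."""
    dx_mm: float
    dy_mm: float
    dtheta_deg: float

    def to_bytes(self) -> bytes:
        # Hypothetical layout: three little-endian 32-bit floats (12 bytes).
        # Real controllers define their own message formats.
        return struct.pack("<3f", self.dx_mm, self.dy_mm, self.dtheta_deg)


@dataclass
class InspectionRecord:
    """Information layer: a richer, self-describing record for MES or QA systems."""
    part_serial: str
    recipe: str
    result: str
    defects: List[str] = field(default_factory=list)
    timestamp_utc: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    corr = PathCorrection(0.12, -0.05, 0.3)
    rec = InspectionRecord("SN-0001", "bracket-A", "FAIL", ["H2 missing"])
    print(len(corr.to_bytes()), rec.to_json())
```

Keeping the two message types separate lets the controller link stay small and deterministic while the MES side can grow new fields without touching robot code.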

What are common deployment pitfalls in high-mix vision-guided automation?

Frequent issues include:

- Inadequate focus on lighting/optics, causing unstable part detection.

- Relying on vision to compensate for poor fixtures or upstream variability.

- Fragmented software stacks causing slow changeovers.

- Insufficient staff training, leading to dependency on a few experts.

Early trials with representative parts, standard cell designs, and systematic operator training help mitigate these issues.
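One practical form of an early trial is a repeatability test: image the same representative part several times without moving it and check how much the reported pose scatters. Large scatter usually points to lighting, optics, or model issues rather than real part variation. The sketch assumes poses are (x in mm, y in mm, angle in degrees) with small angles that never wrap around ±180°, and the 0.05 mm and 0.05° limits are placeholders, not recommended values.

```python
import statistics
from typing import List, Tuple

Pose = Tuple[float, float, float]  # (x_mm, y_mm, angle_deg)


def repeatability(poses: List[Pose]) -> Tuple[float, float, float]:
    """Sample standard deviation of x, y and angle across repeated captures of one part."""
    if len(poses) < 2:
        raise ValueError("need at least two captures")
    xs, ys, angles = zip(*poses)
    return statistics.stdev(xs), statistics.stdev(ys), statistics.stdev(angles)


def is_stable(poses: List[Pose], max_xy_mm: float = 0.05, max_angle_deg: float = 0.05) -> bool:
    """True if every axis scatters less than the (hypothetical) limits."""
    sx, sy, sa = repeatability(poses)
    return sx <= max_xy_mm and sy <= max_xy_mm and sa <= max_angle_deg


if __name__ == "__main__":
    steady = [(100.00, 50.00, 0.00), (100.01, 50.00, 0.01),
              (99.99, 50.01, 0.00), (100.00, 49.99, -0.01)]
    print(repeatability(steady), is_stable(steady))
```

Repeating the test under different shifts' lighting, or with parts of different surface finish, is a cheap way to find detection problems before the cell goes into production.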

How do workforce roles change with vision-guided automation?

Organizations tend to:

- Shift operator responsibilities to supervision and exception handling.

- Develop roles in robotics, vision engineering, and data analytics.

- Offer targeted training in safety, programming-by-demonstration, vision setup, and process optimization.

The goal is to redeploy expertise from repetitive tasks to higher-value activities, not to eliminate skilled positions.

Conclusions and Next Steps for Metal Fabricators

Vision-guided automation is becoming a viable strategy for high-mix metal fabrication, extending beyond high-volume OEMs. Combining robotics, machine vision, and standardized data interfaces enables:

- Faster, more adaptive scheduling across diverse product types

- More consistent weld and cut quality, with fewer defects

- Enhanced inspection and traceability for demanding applications

Plant and engineering leaders should consider:

- Mapping processes where ergonomic risk, quality needs, and product complexity intersect (e.g., CNC tending, welding, inspection).

- Piloting vision-guided cells on representative high-mix workloads to validate changeover, quality, and integration.

- Aligning equipment purchases with OPC UA and key companion specifications for robots, vision, and CNCs.

- Developing a skills roadmap that combines robotics, vision, process, and data expertise, with clear progression for technical staff.

- Planning for scale by standardizing equipment, software, and integration across sites.

Viewing vision-guided automation as a strategic asset positions metalworking organizations to handle product variety, protect profitability, and compete on flexibility and quality in the next decade.