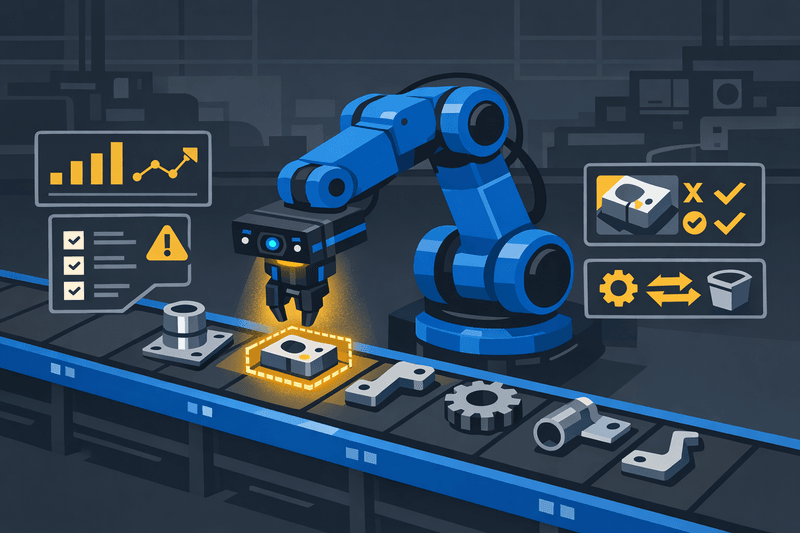

AI visual inspection systems achieve defect detection rates above 99%, compared to roughly 80% for human inspectors under ideal conditions - and that gap widens on night shifts, during repetitive tasks, and when part mix is high. For fabricators running dozens of part variants per day, that performance differential is no longer theoretical. It is a production-floor reality.

Vision-guided automation has moved well beyond niche pilots. Advances in AI-powered vision, adaptive grippers, edge computing, and data integration are converging to make high-mix, low-volume fabrication one of the most compelling deployment scenarios in industrial automation.

What Is Driving Adoption Now

Three forces are accelerating the shift from manual inspection to vision-guided production lines.

High-mix complexity without sacrifice. Modern AI vision systems can identify part variants, verify critical dimensions, and trigger changeover routines in seconds. According to a 2026 MDPI review covering more than 50 studies across automotive, aerospace, and general manufacturing, ML-powered vision systems achieve defect detection and classification accuracy exceeding 95%, with some systems reaching 98-100% in controlled environments. The same review found that 77% of the implementations it examined remain at pilot or prototype scale, underscoring the headroom for full-scale production rollout.
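
Triggering a changeover from a variant identification can be pictured as a lookup from the classifier's output to a stored recipe. The sketch below is a minimal illustration under stated assumptions: `Recipe`, `RECIPES`, `select_recipe`, the variant IDs, and the 0.9 confidence threshold are all hypothetical. A real cell would load recipes from its own controller or MES rather than from a hard-coded table.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Recipe:
    inspection_program: str
    gripper: str
    critical_dims_mm: dict = field(default_factory=dict)  # dimension name -> nominal value


# Hypothetical recipe table keyed by the variant ID the vision classifier returns.
RECIPES = {
    "BRKT-100": Recipe("insp_brkt_100", "parallel_jaw", {"width": 40.0, "hole_pitch": 25.0}),
    "BRKT-200": Recipe("insp_brkt_200", "vacuum", {"width": 60.0, "hole_pitch": 35.0}),
}


def select_recipe(variant_id: str, confidence: float,
                  min_confidence: float = 0.9) -> Optional[Recipe]:
    """Return the recipe to load, or None to route the part to an operator."""
    if confidence < min_confidence or variant_id not in RECIPES:
        # Low-confidence or unknown variant: exception handling, not an automatic changeover.
        return None
    return RECIPES[variant_id]


recipe = select_recipe("BRKT-200", confidence=0.97)
print(recipe.inspection_program if recipe else "route to operator")  # insp_brkt_200
print(select_recipe("BRKT-200", confidence=0.55))                    # None
```

Routing low-confidence or unknown parts to a person rather than guessing is the design choice that keeps a misclassification from loading the wrong program.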

Regulatory and customer pressure for traceability. The global AI visual inspection system market was valued at $29.82 billion in 2025 and is projected to reach $85.24 billion by 2030, growing at a CAGR of 23.3%. Much of that growth stems from compliance mandates in automotive, aerospace, and medical device sectors, where end-to-end part traceability is no longer optional. Vision-enabled inspection data - lot numbers, defect codes, dimensional outputs - feeds directly into MES and ERP systems, enabling accurate BOMs, audit-ready records, and supplier scorecards.
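
The quoted market figures can be sanity-checked with the standard compound-growth relationship, CAGR = (end / start)^(1 / years) - 1. The sketch below assumes the 2025 and 2030 values are exactly five years apart; the function names are illustrative.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Market figures quoted above, in billions of USD (2025 -> 2030 is five years).
start_2025 = 29.82
end_2030 = 85.24

print(f"implied CAGR: {implied_cagr(start_2025, end_2030, years=5):.1%}")
print(f"2030 value at 23.3%: ${project(start_2025, 0.233, 5):.2f}B")
```

With these inputs the implied rate comes out at about 23.4%, and compounding $29.82 billion at 23.3% for five years gives roughly $85.0 billion, so the three published numbers agree to within rounding.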

Labor shortages and skilled-worker gaps. According to MIT research cited at FABTECH 2025, only 10% of machine tending operations are currently automated, despite tending being one of the most labor-intensive and injury-prone tasks on the fabrication floor. Vision-guided robots reduce operator exposure to grinding hazards, fumes, and repetitive strain while freeing personnel for higher-value exception handling and quality oversight.

Where Vision-Guided Automation Is Being Deployed

The cross-industry breadth of current deployments is notable. Each sector brings a distinct challenge, but the core value proposition - reduced changeover time, consistent quality across a wider part mix, and automated defect rejection - repeats across all of them.

- Automotive components: Vision systems identify randomly oriented castings and stampings on conveyor lines, guiding pick-and-place robots without dedicated fixtures. Vision-guided assembly cells have demonstrated 97% consistency in part assembly while reducing cycle times, according to robotic guidance industry benchmarks. Major U.S. OEMs, including General Motors, Ford, and Tesla, have implemented comprehensive vision-guided automation across their production facilities.

- Aerospace fittings: AI vision monitors weld seam integrity, composite surface condition, and dimensional conformance on high-value, low-volume components. Tight AS9100 traceability requirements make integration of vision data into quality management systems essential - not optional.

- Electrical enclosures: Short-run enclosure fabrication - with variants in punched hole patterns, bend profiles, and hardware insertions - is a natural fit for vision-guided automation. Systems verify hole locations, check fastener presence, and confirm bend angles inline, eliminating end-of-line inspection bottlenecks.

- Architectural hardware (muntins, glazing components): Profile-heavy parts with tight finish tolerances are traditionally difficult to inspect manually at production speed. Vision-based surface inspection catches scratch patterns, coating voids, and dimensional deviations that operators routinely miss during high-throughput runs.
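
The inline checks described for electrical enclosures (hole locations, fastener presence, bend angles) ultimately reduce to comparing measured values against nominal values with tolerances. The sketch below assumes the vision system has already produced the measurements. `HoleSpec`, `Measurement`, `inspect_enclosure`, the defect codes, and all tolerance values are hypothetical, not any vendor's API. Treating `tol_mm` as a maximum radial deviation is a simplification of drawing-based positional tolerancing.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class HoleSpec:
    x_mm: float       # nominal hole centre from the drawing
    y_mm: float
    tol_mm: float     # maximum allowed radial deviation from nominal


@dataclass
class Measurement:
    holes: list[tuple[float, float]]  # measured centres, in the same order as the spec
    fasteners_found: int
    bend_angle_deg: float


def inspect_enclosure(spec_holes: list[HoleSpec], fasteners_expected: int,
                      bend_nominal_deg: float, bend_tol_deg: float,
                      m: Measurement) -> list[str]:
    """Return hypothetical defect codes; an empty list means the part passes."""
    defects = []
    if len(m.holes) != len(spec_holes):
        defects.append("HOLE_COUNT")
    for i, (spec, (x, y)) in enumerate(zip(spec_holes, m.holes)):
        # Radial distance between the measured and nominal hole centre.
        if math.hypot(x - spec.x_mm, y - spec.y_mm) > spec.tol_mm:
            defects.append(f"HOLE_POS_{i}")
    if m.fasteners_found < fasteners_expected:
        defects.append("FASTENER_MISSING")
    if abs(m.bend_angle_deg - bend_nominal_deg) > bend_tol_deg:
        defects.append("BEND_ANGLE")
    return defects


spec = [HoleSpec(20.0, 15.0, 0.2), HoleSpec(120.0, 15.0, 0.2)]
good = Measurement(holes=[(20.05, 15.02), (119.9, 15.1)], fasteners_found=4, bend_angle_deg=90.3)
bad = Measurement(holes=[(20.05, 15.02), (120.4, 15.0)], fasteners_found=3, bend_angle_deg=91.0)

print(inspect_enclosure(spec, 4, 90.0, 0.5, good))  # []
print(inspect_enclosure(spec, 4, 90.0, 0.5, bad))   # ['HOLE_POS_1', 'FASTENER_MISSING', 'BEND_ANGLE']
```

Returning a list of codes rather than a single pass/fail flag keeps the reason for each rejection, which is what later feeds traceability records.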

For an in-depth look at how vision systems accelerate changeover in job-shop environments, see earlier coverage: Vision-Guided Robotics Speed Changeovers in High-Mix Metal Fabrication.

The 2D vs. 3D Vision Decision

Selecting the right camera modality is one of the most consequential early decisions in any vision-guided automation project. The trade-offs are well defined:

{{component:comparison-2d-3d}}

Quality checks and inspection represent the largest application of 2D vision systems, accounting for over 51% of the 2D machine vision market, while 3D systems are essential wherever depth perception, complex geometry measurement, or bin-picking is required. For reflective metal surfaces - a persistent challenge in sheet metal and machined-part inspection - lighting strategy is as important as camera selection. Structured lighting, coaxial illumination, and dark-field configurations each eliminate different classes of glare and shadow artifacts; the correct choice depends on the specific surface finish and defect type being targeted.
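
The 2D/3D rule of thumb in the paragraph above can be written as a simple screening function for a list of cell tasks. This is a minimal sketch that encodes only the three criteria named in the text (depth perception, complex geometry measurement, bin-picking). `choose_modality` and the example tasks are hypothetical, and the function deliberately says nothing about lighting, which still has to be chosen per surface finish and defect type.

```python
def choose_modality(needs_depth: bool, complex_geometry: bool, bin_picking: bool) -> str:
    """Screen a task: 3D if any depth-dependent requirement applies, otherwise 2D."""
    if needs_depth or complex_geometry or bin_picking:
        return "3D"
    return "2D"


# Hypothetical tasks for one high-mix cell: (needs_depth, complex_geometry, bin_picking).
tasks = {
    "label and hole-presence check on flat panel": (False, False, False),
    "weld bead profile measurement": (True, True, False),
    "pick randomly oriented castings from a bin": (True, False, True),
}

for name, flags in tasks.items():
    print(f"{name}: {choose_modality(*flags)}")
```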

Traceability as a Supply Chain Asset

Vision-guided systems do not merely inspect parts - they generate a continuous stream of structured quality data. Each inspection cycle records the defect type, location, lot association, and timestamp, providing the raw material for traceability, process improvement, and compliance reporting. When routed into MES and ERP systems, this data delivers tangible supply chain benefits:

- Reduced buffer stock: Inline defect rejection eliminates the need for large downstream inspection queues or safety stock to absorb quality variability.

- Improved forecasting accuracy: Real-time scrap and yield data feeds planning systems with actual production performance rather than historical estimates.

- Enhanced supplier accountability: Vision-verified incoming material inspection creates objective records for supplier scorecards and warranty cost analysis.

- Faster compliance reporting: Audit-ready traceability records are generated automatically, reducing the manual effort required for customer or regulatory audits.

This is why the most successful deployments treat vision integration with MES/ERP as a day-one requirement - not an afterthought. Retrofitting data architecture after hardware installation is the single most cited cause of stalled pilots.
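
Below is a minimal sketch of the record each inspection cycle might emit and of how the same records can be rolled up into the yield figures that planning systems consume. The schema (`InspectionRecord`, its field names, the JSON payload) is a hypothetical illustration, not an MES or ERP vendor's interface. Yield here means first-pass yield: passed parts divided by inspected parts, per lot.

```python
from __future__ import annotations

import json
from collections import defaultdict
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class InspectionRecord:
    part_id: str
    lot: str
    timestamp: str                                         # ISO 8601, UTC
    passed: bool
    defect_type: Optional[str] = None
    defect_location: Optional[tuple[float, float]] = None  # (x, y) on the part, mm


def to_mes_payload(record: InspectionRecord) -> str:
    """Serialize one record as JSON (hypothetical schema; tuples become JSON arrays)."""
    return json.dumps(asdict(record))


def yield_by_lot(records: list[InspectionRecord]) -> dict[str, float]:
    """First-pass yield per lot: passed parts / inspected parts."""
    counts = defaultdict(lambda: [0, 0])  # lot -> [passed, inspected]
    for r in records:
        counts[r.lot][1] += 1
        if r.passed:
            counts[r.lot][0] += 1
    return {lot: passed / total for lot, (passed, total) in counts.items()}


now = datetime.now(timezone.utc).isoformat()
records = [
    InspectionRecord("P-001", "LOT-A", now, True),
    InspectionRecord("P-002", "LOT-A", now, False, "scratch", (42.0, 7.5)),
    InspectionRecord("P-003", "LOT-B", now, True),
]
print(to_mes_payload(records[1]))
print(yield_by_lot(records))  # {'LOT-A': 0.5, 'LOT-B': 1.0}
```

Because the record carries the lot ID, the same data serves the audit trail and the yield roll-up without a separate collection step.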

Estimating Your ROI

Before committing to a full-cell deployment, production teams benefit from a structured payback estimate. The tool below allows plant managers and process engineers to model savings based on their own changeover frequency, scrap costs, and labor rates.

{{widget:roi-estimator}}

Industry research from Forrester found a 374% average three-year ROI from AI visual inspection deployments, with an average payback period of 7-8 months. Deloitte's 2025 financial analysis found that organizations implementing AI inspection at a single critical station achieve an average 31% reduction in total quality control costs within two years. Most manufacturers reach positive ROI within 6-12 months through combined savings from reduced scrap, lower inspection labor costs, and decreased warranty exposure.
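
The kind of payback estimate the tool above supports can also be reproduced by hand. The sketch below is not the widget's actual model. It assumes savings come only from changeover time, scrap, and inspection labor, it ignores discounting and ongoing maintenance costs, and every input value is a hypothetical placeholder to be replaced with a plant's own baseline.

```python
from dataclasses import dataclass


@dataclass
class CellAssumptions:
    investment: float                       # up-front cost: cameras, lighting, integration
    changeovers_per_week: float
    minutes_saved_per_changeover: float
    cost_per_hour: float                    # loaded labor plus lost production per changeover hour
    annual_scrap_savings: float
    annual_inspection_labor_savings: float
    weeks_per_year: float = 48


def annual_savings(a: CellAssumptions) -> float:
    changeover_hours = (a.changeovers_per_week * a.minutes_saved_per_changeover / 60
                        * a.weeks_per_year)
    return (changeover_hours * a.cost_per_hour
            + a.annual_scrap_savings
            + a.annual_inspection_labor_savings)


def payback_months(a: CellAssumptions) -> float:
    return a.investment / (annual_savings(a) / 12)


def roi(a: CellAssumptions, years: int = 3) -> float:
    """Simple, undiscounted ROI: (total savings - investment) / investment."""
    return (annual_savings(a) * years - a.investment) / a.investment


# Hypothetical single-cell example.
cell = CellAssumptions(investment=250_000, changeovers_per_week=20,
                       minutes_saved_per_changeover=30, cost_per_hour=150,
                       annual_scrap_savings=90_000, annual_inspection_labor_savings=120_000)

print(f"annual savings: ${annual_savings(cell):,.0f}")    # $282,000
print(f"payback: {payback_months(cell):.1f} months")      # 10.6 months
print(f"3-year ROI: {roi(cell):.0%}")                     # 238%
```

The published averages cited above come from different cost structures; this example only shows how the inputs combine, not what a given plant should expect.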

A Six-Step Path from Pilot to Production

{{component:steps-implementation}}

Two additional integration requirements merit attention:

PLC and CNC connectivity. Vision systems that cannot communicate directly with existing PLCs, CNCs, and robotics controllers create data silos and stalled lines. Verify protocol compatibility - OPC-UA, EtherNet/IP, PROFINET - before finalizing hardware selection. Traditional PLC control systems often cannot keep up with the needs of job shops trying to integrate advanced AI-vision technologies, making controller selection a critical integration decision.
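
Checking protocol compatibility before hardware selection can start as a simple set comparison between what each candidate vision system supports and what every controller in the cell supports. The device inventory, candidate names, and `unreachable_devices` helper below are hypothetical; the protocol names are the ones listed above.

```python
# Hypothetical cell inventory: protocols each existing controller supports.
CELL_DEVICES = {
    "press_brake_plc": {"EtherNet/IP", "OPC-UA"},
    "machining_cnc": {"OPC-UA"},
    "robot_controller": {"EtherNet/IP", "PROFINET"},
}

# Hypothetical candidate vision systems and the protocols they advertise.
CANDIDATES = {
    "camera_a": {"PROFINET"},
    "camera_b": {"OPC-UA", "EtherNet/IP"},
}


def unreachable_devices(vision_protocols: set, devices: dict) -> list:
    """Devices that share no protocol with the vision system, sorted by name."""
    return sorted(name for name, protos in devices.items() if not protos & vision_protocols)


for camera, protos in CANDIDATES.items():
    gaps = unreachable_devices(protos, CELL_DEVICES)
    print(camera, "OK" if not gaps else f"no common protocol with: {', '.join(gaps)}")
```

A shared protocol name is only a first filter: roles (client/server, controller/device) and data mappings still need to be confirmed with each vendor before hardware is finalized.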

Operator retraining. The workforce dimension is consistently underweighted in vision-guided automation projects. Operators on vision-guided lines transition from manual inspection to exception handling, model retraining oversight, and calibration maintenance. Manufacturers that pair automation rollouts with structured retraining programs realize faster payback and fewer unplanned production stops. For a broader look at how workforce adaptation is evolving alongside automation, see Vision-Guided Automation Reshapes High-Mix Metal Fabrication.

Key Takeaways

- Vision-guided automation is production-scale technology in 2025, not a pilot-only proposition - but 77% of the ML-powered vision implementations covered in the MDPI review still remain at pilot or prototype scale, giving early movers a structural advantage.

- The business case rests on three pillars: changeover time reduction, inline defect rejection, and structured traceability data that improves every downstream system it touches.

- Camera modality (2D vs. 3D), lighting strategy, and MES/ERP integration architecture must be resolved before hardware procurement - not after.

- Operator retraining is not a soft consideration; it is a hard dependency for realizing full payback.

- Start narrow: a single, well-defined high-mix cell with a clear baseline is the most reliable path to a scalable, self-funding automation roadmap.